SEO Context

Google has been steadily integrating AI features into its search results pages (SERPs), especially since around March-April 2025. Because these features push organic links further down the page, there have been widespread reports of traffic decreases across the industry. This phenomenon is often referred to as "zero-click search" (source: Bain & Company). While this is understandably alarming, it doesn't necessarily mean that "SEO is dead," as the popular mantra goes.

How AI Search Has Shifted User Journey

Search behavior has shifted. Instead of clicking the 10 blue links for answers across the entire funnel, users now get more of their answers from AI first, then seek out specific websites once they are ready to do deeper research or make a purchase. This shift applies to both Google's AI Overviews / AI Mode and LLMs like ChatGPT.

While your traffic is down, it doesn't mean that fewer people are looking to buy from you or do business with you. It means the way that information is consumed has changed. The goal with AI Search is to get cited within the response. In theory, the resulting traffic should be more qualified, even if it gets attributed to Direct traffic or branded search later on, though research by Amsive has shown that this is not always the case.

The Importance of Retrievability

In the absence of clicks and keyword rankings, how can you measure progress? One way to analyze your content is to measure its retrievability. If your goal is to be more visible in Google AI responses, use Google's embedding models to analyze the semantic relevance of your content. If your goal is to be more visible on ChatGPT, use OpenAI's embedding models instead. Doing this gives you insight into how these LLMs determine what content is relevant.
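To make "semantic relevance" concrete, here is a minimal sketch that ranks passages by cosine similarity against a query vector. The three-dimensional vectors are made-up placeholders. In real use they would come from an embedding model such as EmbeddingGemma or one of OpenAI's embedding models. `rank_passages` is a hypothetical helper name used only for illustration.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # treat an all-zero vector as having no similarity
    return dot / (norm_a * norm_b)

def rank_passages(query_vec, passages):
    """Return (similarity, text) pairs sorted from most to least similar."""
    scored = [(cosine_similarity(query_vec, vec), text) for text, vec in passages]
    return sorted(scored, key=lambda pair: pair[0], reverse=True)

# Toy vectors standing in for real embeddings (assumption for illustration).
query = [0.9, 0.1, 0.0]
passages = [
    ("Passage about pricing", [0.8, 0.2, 0.1]),
    ("Passage about company history", [0.1, 0.9, 0.3]),
]
ranking = rank_passages(query, passages)
for score, text in ranking:
    print(f"{score:.3f}  {text}")
```

The passage whose vector points in nearly the same direction as the query ranks first, regardless of how long either text is.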

EmbeddingGemma is essentially a miniaturized version of Gemini, and Gemini is the AI powerhouse behind Google's advanced search capabilities. This isn't just another language model — it's a window into how Google's search infrastructure actually works.

Dan Petrovic

After Google released the EmbeddingGemma model on September 4th, 2025, I read Dan Petrovic's article "EmbeddingGemma: The Game-Changing Model Every SEO Professional Needs To Know," which gave me some ideas for how to put this to use. Dan is arguably the smartest working SEO today, so when he says something, I'm going to listen.

One idea I've been pursuing is building a custom AI search engine based on EmbeddingGemma and using it to analyze new content against existing search results before deciding to publish it. I got this idea recently after a conversation with my colleague, Grant Simmons. I'll detail the process below.

Creating a Vector Database

I had previously created a Qdrant vector database to build a chatbot, so I had the basic infrastructure in place to build a custom search engine. The challenge was getting content to "chunk" coherently so the embedding model could retrieve and display it effectively. My initial efforts used a 500-character chunk limit plus a 50-character overlap for semantic continuity. This was the easiest approach to implement. However, arbitrary character counts result in embeddings that don't always make much sense semantically.
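The fixed-size approach can be sketched in a few lines. This is an illustration of the idea using the 500/50 values mentioned above, not the exact script I ran. `chunk_by_characters` is a hypothetical name.

```python
def chunk_by_characters(text, chunk_size=500, overlap=50):
    """Split text into fixed-size character windows that overlap."""
    if overlap >= chunk_size:
        raise ValueError("overlap must be smaller than chunk_size")
    step = chunk_size - overlap  # each window starts 450 characters after the last
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # the last window already reached the end of the text
    return chunks

sample = "word " * 240  # 1,200 characters of filler text
chunks = chunk_by_characters(sample)
print([len(c) for c in chunks])
```

Because the window boundaries ignore sentences and headings, a chunk can start or end mid-thought, which is where the semantic problems come from.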

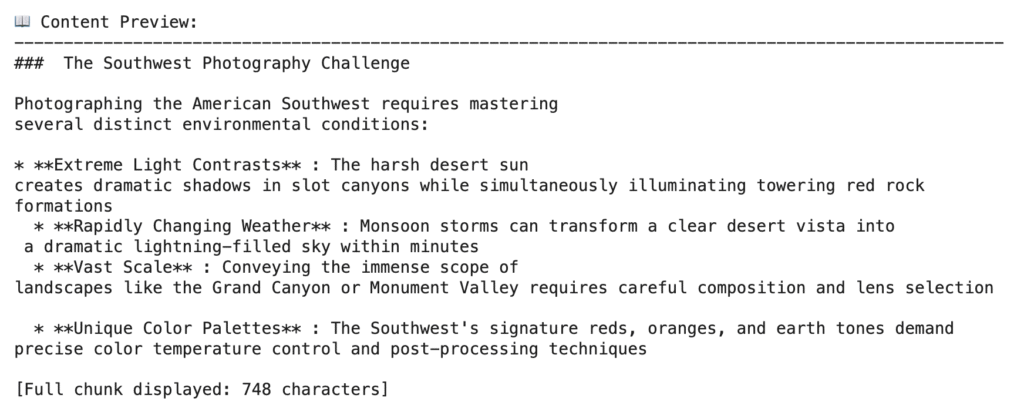

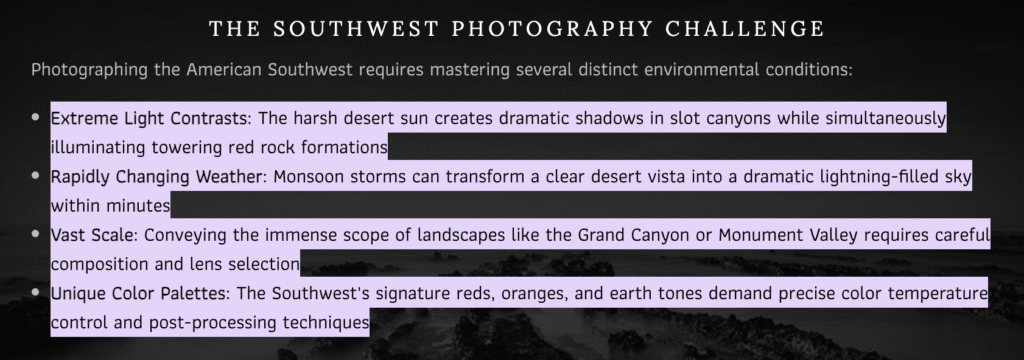

Layout-Aware Chunking

Layout-aware chunking uses HTML formatting to inform the chunk sizes. Headers are grouped with the nearest paragraph or block of content. Lists and tables are self-contained chunks. This approach made the most sense to me as it more naturally reflects how content is written for websites. The only challenge is that embedding models don't process HTML. They need the text converted to a format they can understand, so I relied on the Selenium, Beautiful Soup and HTML2Text Python libraries to pre-process the text for the EmbeddingGemma model. This took many revisions to dial in, and even then I'm not 100% happy with the execution, but what I have is good enough to use.
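Here is a simplified sketch of the grouping rules described above. To keep it self-contained, it swaps in Python's built-in `html.parser` instead of the Selenium, Beautiful Soup and HTML2Text pipeline, and it assumes the input is already-rendered body HTML with scripts and styles removed. The class and function names are hypothetical.

```python
from html.parser import HTMLParser

HEADINGS = {"h1", "h2", "h3", "h4", "h5", "h6"}
SELF_CONTAINED = {"ul", "ol", "table"}
INLINE = {"a", "b", "i", "em", "strong", "span", "code"}

class LayoutChunker(HTMLParser):
    def __init__(self):
        super().__init__()
        self.chunks = []      # finished plain-text chunks
        self.buffer = []      # text pieces of the chunk being built
        self.block_depth = 0  # > 0 while inside a list or table

    def flush(self):
        """Close the current chunk, collapsing runs of whitespace."""
        text = " ".join("".join(self.buffer).split())
        if text:
            self.chunks.append(text)
        self.buffer = []

    def handle_starttag(self, tag, attrs):
        if tag in SELF_CONTAINED:
            if self.block_depth == 0:
                self.flush()  # end the running heading + paragraph chunk
            self.block_depth += 1
        elif tag in HEADINGS and self.block_depth == 0:
            self.flush()      # a heading starts a new chunk
        if tag not in INLINE:
            self.buffer.append(" ")  # keep block-level text from running together

    def handle_endtag(self, tag):
        if tag not in INLINE:
            self.buffer.append(" ")
        if tag in SELF_CONTAINED and self.block_depth > 0:
            self.block_depth -= 1
            if self.block_depth == 0:
                self.flush()  # the whole list or table becomes one chunk

    def handle_data(self, data):
        self.buffer.append(data)

def chunk_html(html):
    parser = LayoutChunker()
    parser.feed(html)
    parser.close()
    parser.flush()  # emit whatever is left after the last tag
    return parser.chunks

sample_html = """
<h2>Pricing</h2>
<p>Plans start at <strong>$10</strong> per month.</p>
<ul><li>Basic</li><li>Pro</li></ul>
<h2>Support</h2>
<p>Email us any time.</p>
"""
for chunk in chunk_html(sample_html):
    print(repr(chunk))
```

One known gap in this sketch: a heading followed directly by a list ends up as a chunk of its own, because the list always starts a fresh chunk.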

Simulate Search Results With a Vector Database

The challenge with analyzing AI search results is that, unlike traditional search results, the responses are probabilistic rather than deterministic. This means the response may differ slightly or drastically from one session to the next. The other complication is that Google AI uses a "Query Fan-Out" technique, which means that the search term you enter may not be the only one Google uses to generate the results.

With all of this in mind, what I set out to do here is create a tool that can analyze the AI Overview or AI Mode results by comparing the text against my vector database of content. The idea is that if we have the same corpus of content that Google is assessing for search and use the same embedding model that Google is using, we can see how our desired landing page scores against content from other pages on our site and relevant competitor pages. To get more diverse results, I'd recommend using a VPN and Incognito Mode for at least some of the searches so they're not biased by your own personal browsing habits.

Not sure why a competitor is showing up so much on Google AI Search compared to your content? If Google is searching for relevant content and not merely high-authority domains, then score the semantic similarity of your content against the rest of the content out there. You may find that the page you expect to display doesn't have the most relevant passages even on your own site, much less compared to competitor sites.

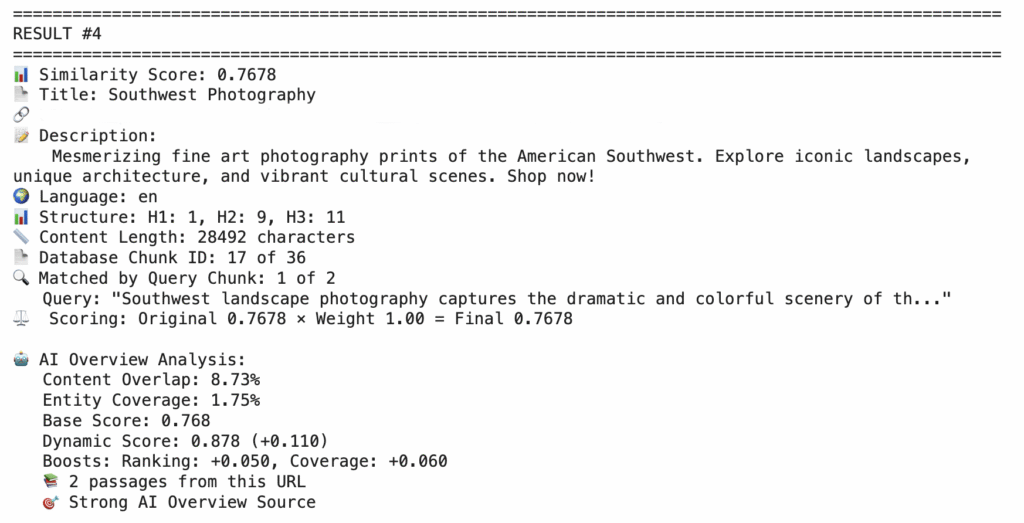

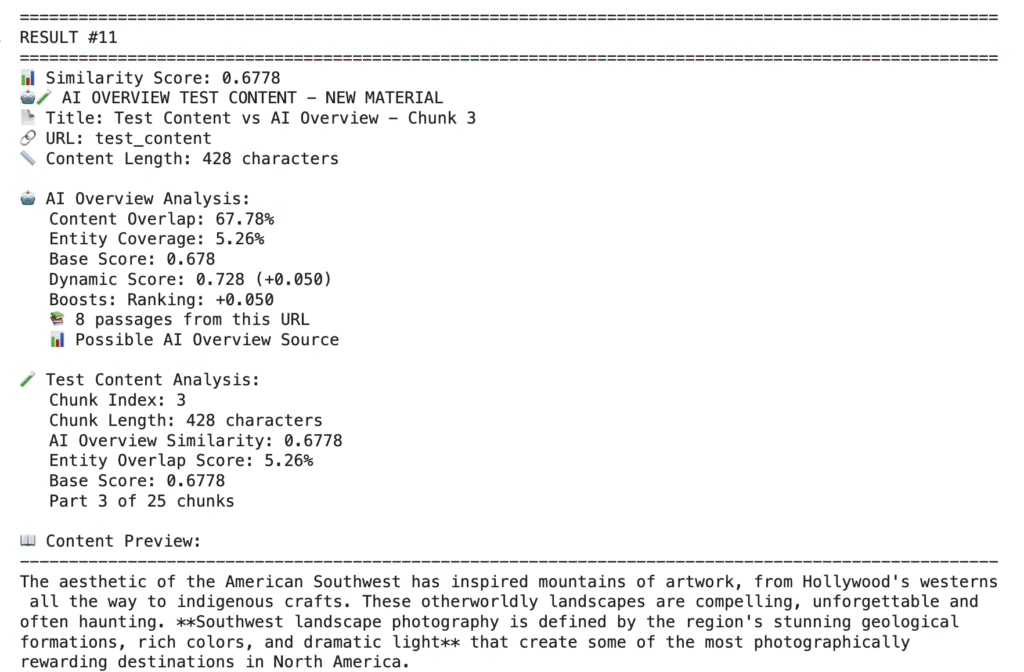

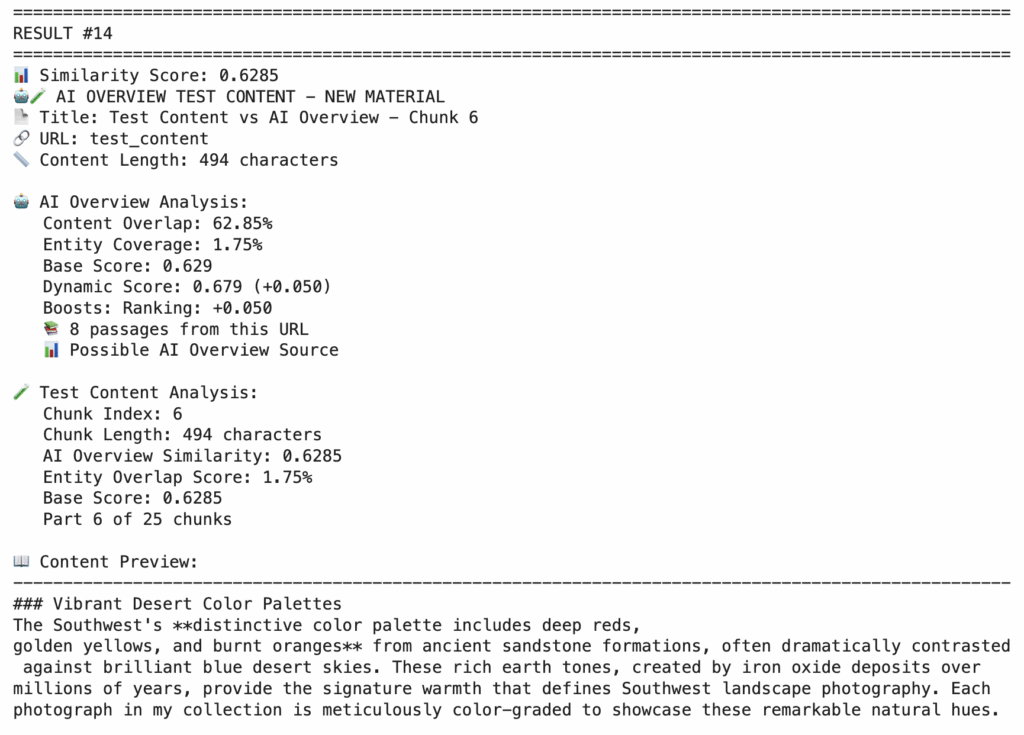

With my Google Colab script, I pasted in the AI Mode text then scored all of the content from my vector database against the search result. I added some additional scoring beyond just EmbeddingGemma cosine similarity scores to account for entity coverage and URLs with multiple ranking passages. The highest scoring content chunk on my own site also happened to be the "fraggle" that Google linked to from the AI search result. Does this validate my approach?
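The blended scoring can be sketched roughly as follows. The weights (`entity_weight`, `url_bonus`), the substring-based entity check and the way the pieces are combined are all assumptions for illustration, not the exact formula from my Colab script. The two-dimensional vectors are toy stand-ins for real EmbeddingGemma embeddings.

```python
import math
from collections import Counter

def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norms = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norms if norms else 0.0

def entity_coverage(chunk_text, entities):
    """Share of target entities mentioned in the chunk (case-insensitive)."""
    if not entities:
        return 0.0
    lowered = chunk_text.lower()
    return sum(1 for e in entities if e.lower() in lowered) / len(entities)

def score_chunks(response_vec, entities, chunks, top_k=10,
                 entity_weight=0.2, url_bonus=0.05):
    """Blend similarity, entity coverage and a bonus for URLs with several top-k passages."""
    base = []
    for chunk in chunks:
        sim = cosine_similarity(response_vec, chunk["vector"])
        cov = entity_coverage(chunk["text"], entities)
        base.append((sim + entity_weight * cov, chunk))
    base.sort(key=lambda pair: pair[0], reverse=True)

    # Count how many of the top-k passages each URL contributes.
    top_urls = Counter(chunk["url"] for _, chunk in base[:top_k])
    results = []
    for score, chunk in base:
        extra = url_bonus * max(top_urls[chunk["url"]] - 1, 0)
        results.append((score + extra, chunk["url"], chunk["text"]))
    results.sort(key=lambda row: row[0], reverse=True)
    return results

response_vec = [1.0, 0.0]  # embedding of the pasted AI Mode text (toy value)
entities = ["EmbeddingGemma", "Qdrant"]
chunks = [
    {"url": "/a", "text": "EmbeddingGemma with Qdrant", "vector": [0.9, 0.1]},
    {"url": "/a", "text": "Vector search basics", "vector": [0.8, 0.2]},
    {"url": "/b", "text": "Company history", "vector": [0.1, 0.9]},
]
results = score_chunks(response_vec, entities, chunks)
for score, url, text in results:
    print(f"{score:.3f}  {url}  {text}")
```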

Test New Content Before Publishing It

Another benefit of having this vector database is that I can test new content before publishing it. If I'm going to update an existing page, I want the update to attract more visibility, not less. Previously, we had to guess, follow best practices, and hope the results would play out in the following months.

Now, using the same search functionality that I created with the EmbeddingGemma model, I can search the entire vector database and compare it against new content before deciding to publish. For this test, I copied and pasted the AI response and had the LLM update the existing content with it. I then uploaded the new text as a markdown file to my Python script in Google Colab to chunk it and generate embeddings. Intuitively, I assumed this would yield more semantically relevant passages, as it was borderline plagiarism. That didn't turn out to be true. The new content passages didn't make the top 10 results. Very interesting!
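The pre-publish check boils down to asking where each draft chunk would rank if it were added to the existing corpus. Here is a minimal sketch with toy vectors in place of real embeddings. `candidate_ranks` and the chunk names are hypothetical.

```python
import math

def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norms = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norms if norms else 0.0

def candidate_ranks(target_vec, corpus, candidates):
    """For each draft chunk, return its 1-based rank against the existing corpus."""
    corpus_scores = [cosine_similarity(target_vec, vec) for vec in corpus.values()]
    ranks = {}
    for name, vec in candidates.items():
        score = cosine_similarity(target_vec, vec)
        # Rank = 1 + number of existing chunks that score strictly higher.
        ranks[name] = 1 + sum(1 for s in corpus_scores if s > score)
    return ranks

target = [1.0, 0.0]  # embedding of the AI response text (toy value)
corpus = {
    "existing-1": [0.9, 0.1],
    "existing-2": [0.5, 0.5],
    "existing-3": [0.2, 0.8],
}
candidates = {"draft-intro": [0.7, 0.3], "draft-faq": [0.1, 0.9]}

ranks = candidate_ranks(target, corpus, candidates)
made_top_10 = [name for name, rank in ranks.items() if rank <= 10]
print(ranks, made_top_10)
```

With a real corpus of hundreds of chunks, an empty `made_top_10` list is the signal I got in the test above: the rewritten passages would not have displaced anything already in the top 10.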

Key Learnings

- How you format text can make a major difference in how embedding models score your content.

- The length of your content passages also makes a difference.

- If the content is too short, it may not have enough entities or context to score well semantically.

- If there is too much content, it can dilute the meaning.

The unknown factor here is how LLMs chunk the content. Just because I format my content and chunk it semantically doesn't mean that LLMs automatically do this all the time. In my test here, however, it was pretty close to the layout-aware chunking strategy. My suggestion is to not overthink this stuff and just write naturally with formatting that is easy to digest.

I'll caveat this entire article with this: AI Search is a relatively new topic that has been much debated. There are a lot of people making outlandish claims and presenting their words as the absolute truth. The truth is that this is uncharted territory for everyone. I don't consider myself an expert in how LLMs work, aside from having done a lot of semantic search analysis and extensive Python coding with each platform, so these are just my own observations and ideas. I'm curious about how this works and I like to build stuff. If you see something interesting, give it a try and share your own observations.