Why GA4 Can't See What's Actually Driving Pipeline

The digital marketing funnel was always a useful fiction. Clicks flowed from search to content to consideration to conversion, each step left a trail in Google Analytics, and the dashboard told you roughly what happened. That model was never the full truth. Google's own “messy middle” research in 2020 showed that buyers had been looping unpredictably between exploration and evaluation for years. But the fiction was useful enough to build budgets and careers around.

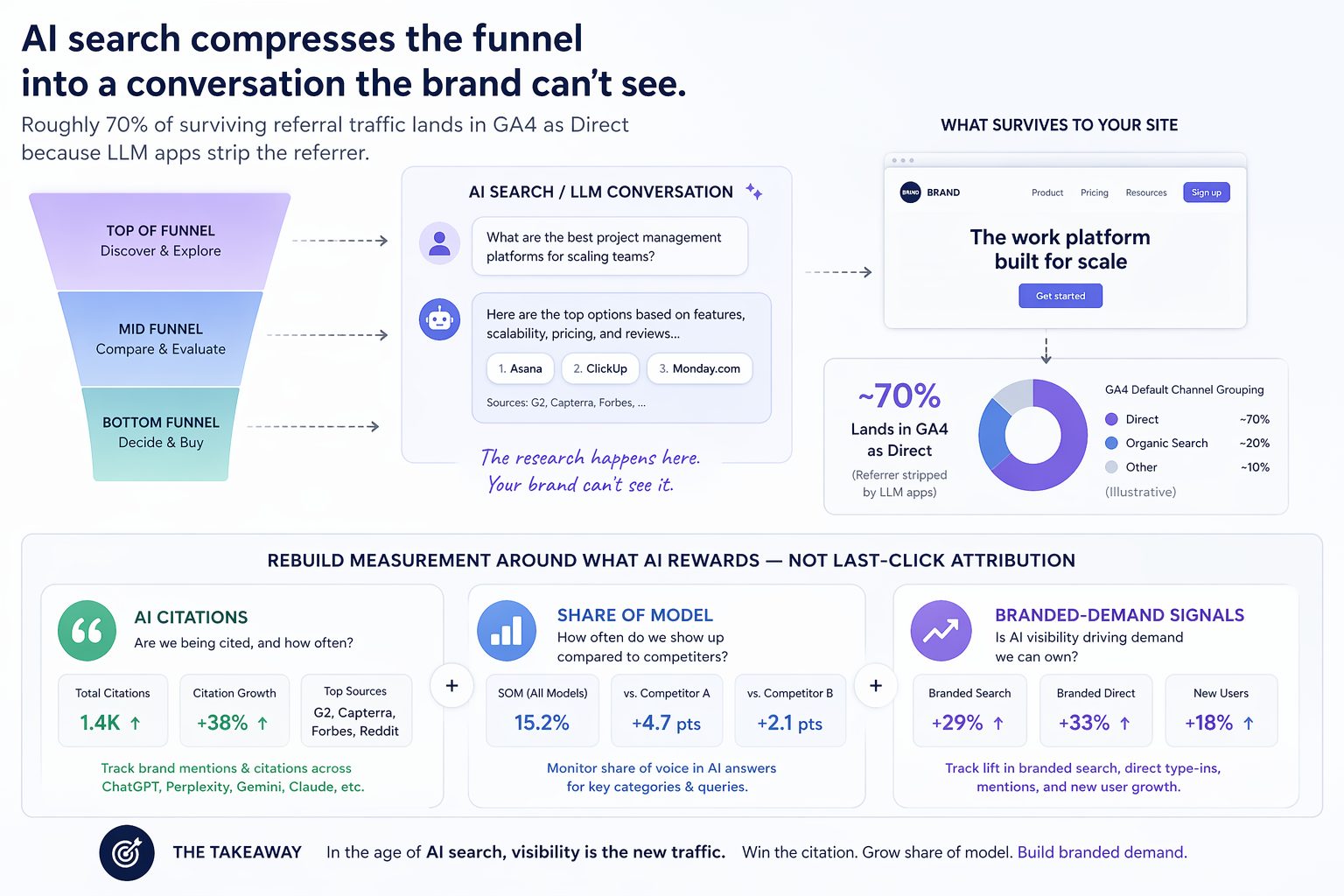

Generative AI search didn't just disturb the funnel. It compressed it. And the analytics stack most teams are still running hasn't caught up.

When someone opens ChatGPT, Perplexity, Gemini, or Google's AI Mode and types something like “which project management tool is best for a 40-person agency that needs client portals and integrates with HubSpot”, they do in one prompt what used to take a week of category research, three listicle articles, a G2 session, and half a dozen branded searches. Awareness, consideration, and shortlist formation now happen inside a conversation you can't see, can't measure, and can't easily influence after it's started.

Marketers are calling this funnel compression. The consequences run deeper than lost clicks.

What Does a Compressed Funnel Actually Mean?

Funnel compression is not the same thing as shorter sales cycles. The underlying psychology of buying hasn't changed. Buyers still need to recognize a problem, explore options, weigh trade-offs, and build confidence before they commit. What's changed is where those stages happen and how many of them a brand can observe.

Before AI search, a typical B2B research process produced 15 to 25 measurable touchpoints across several weeks. A Google search for “best CRM for financial advisors”. A click to a Capterra listing. Another to a comparison article on Forbes Advisor. A YouTube walkthrough. A webinar registration. A vendor blog post. A pricing page. A demo form. Each one was a pixel fire, a session in GA4, a row in the attribution model.

Post-AI, an LLM compresses most of that into a single response. The model already ingested the comparison article, the G2 review corpus, the Reddit thread on r/financialadvisors, and the YouTube transcript. It outputs a paragraph and a shortlist of three vendors. Forrester's 2024 Buyers' Journey Survey found that 89% of B2B buyers have adopted generative AI somewhere in the purchasing process, and their 2025 update confirmed genAI is now the single most cited meaningful interaction type for B2B research. Gartner has been saying for years that buyers spend only 17% of their evaluation time meeting with potential suppliers. What's different now is that the invisible portion is the majority of what actually shapes the decision.

| | Pre-AI B2B Research (2018–2023) | Post-AI B2B Research (2024–Present) |
|---|---|---|
| Measurable touchpoints | 15–25 sessions across several weeks | 1–3 touches, mostly bottom-of-funnel |
| Where research happens | Google + comparison sites + YouTube + review forums | Inside a single LLM response |
| Analytics visibility | ~90% of the journey is captured | Majority of the journey is invisible to the brand |
| Brand influence window | Top-, mid-, and bottom-funnel content all matter | Bottom-funnel validation only; shortlist already formed |
| What to measure | Clicks, sessions, assisted conversions | Citations, mentions, share of model, branded-demand lift |

The funnel still exists. It just runs inside somebody else's black box.

How Much Have Clicks Actually Dropped?

The data from 2025 into early 2026 is remarkably consistent across independent studies, which is unusual in SEO research where methodology differences normally produce conflicting results.

Seer Interactive tracked 3,119 informational queries across 42 client organizations from June 2024 through September 2025. Organic CTR on queries that triggered AI Overviews fell from 1.76% to 0.61%. Seer reports that as a 61% drop, though the endpoints as quoted work out closer to 65%. Paid CTR on the same queries fell from 19.7% to 6.34%. Ahrefs ran a parallel study on 300,000 keywords and concluded that as of December 2025, the presence of an AI Overview reduces organic CTR on position-one content by 58%. Pew Research Center approached it differently, tracking actual browsing from 900 U.S. adults across roughly 69,000 real searches. They found users click any link on a page containing an AI summary about 8% of the time, versus 15% on pages without one. The AI Overview's own cited source links get clicked roughly 1% of the time.

The second finding is worse. Queries without AI Overviews are also declining. Seer's non-AIO CTR dropped 41% year over year. AI Overviews are not the only thing stealing clicks. People have learned a new behavior: read the summary, don't click. Some of that demand has migrated to ChatGPT (now around 800 million weekly active users), Perplexity, Gemini, and social search on TikTok, Reddit, and YouTube.

The publisher damage has been brutal and specific. Digital Content Next, which represents around 40 premium publishers including The New York Times and Condé Nast, documented a median 10% year-over-year drop in Google referral traffic across members in mid-2025. Non-news content sites were down 14%. Chegg disclosed a 49% collapse in non-subscriber traffic between January 2024 and January 2025 and cited AI Overviews directly in its antitrust filing. Business Insider lost roughly 55% of its organic search traffic over three years and cut 21% of its staff in May 2025. DMG Media, which owns MailOnline and Metro, has reported CTR drops approaching 90% on certain queries in submissions to regulators. Stereogum, a music blog, lost 70% of its ad revenue in 2025 and its founder assigned most of the blame to AI Overviews on the record.

Google's public position is that aggregate click volume is “relatively stable” and click quality has “slightly increased”. Every major publisher consortium that has actually measured it has disputed that position. Honestly, I'll let the reader decide who's more credible when one party has the primary data and the other has a monopoly to defend.

Roughly 60% of Google searches now end with no external click, up from 58% a year earlier. For queries with AI Overviews, that rate is considerably higher.

Why Can't GA4 See AI Traffic?

If the remaining clicks were accurately tagged, marketers could at least adapt. The deeper problem is that a lot of the surviving traffic is invisible or misattributed.

Most LLM platforms, especially their mobile apps, strip the HTTP referrer header on outbound clicks. When a Perplexity user taps a citation on their phone and lands on your product page, GA4 receives a session with no referring URL. Per GA4's default processing rules, that session gets dumped into the “Direct” bucket alongside bookmarks and typed URLs. Loamly analyzed 446,405 visits and found that around 70% of AI-driven traffic currently lands as Direct in GA4. Google's own AI Mode had a confirmed referrer tracking bug that stripped attribution entirely until mid-2025, and even after the fix, AI Overview clicks still roll up into regular google / organic because Search Console offers no native way to isolate them.

The second-order effects compound fast:

- Direct traffic inflates. The Direct channel grows 30 or 40% year over year with no brand campaign to explain it. Teams conclude their brand is getting stronger. In reality, a large share of that “direct” traffic is AI-influenced sessions the analytics platform couldn't classify.

- Organic search looks deflated. AI search is intercepting queries that would previously have registered as google / organic sessions. Your organic traffic didn't actually drop 20%; some of it moved into a channel bucket where it can no longer be identified.

- Paid ROAS drifts. Paid gets judged against an organic baseline that's being hollowed out by forces that don't appear in any channel report. Decisions to cut or scale paid get made on corrupted comparisons.

- Content ROI looks false. The blog post that fed the ChatGPT response that built the shortlist that produced the direct-traffic demo request will show zero attributed revenue in GA4. Budget decisions get made against that incomplete picture, and the first thing to get cut is usually the editorial content that was actually feeding the AI models.
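To make the Direct inflation concrete, here is a back-of-envelope sketch in Python. It assumes the roughly 70% misattribution share quoted above holds for your property, which it may not, and the function names and session counts are hypothetical. Given the AI sessions you can actually tag, it backs out how many more are probably sitting in Direct.

```python
def estimate_hidden_ai_sessions(tagged_ai_sessions: float,
                                misattributed_share: float = 0.70) -> dict:
    """Back out total and hidden AI sessions from the tagged portion.

    misattributed_share is an assumption: the fraction of AI-driven
    sessions that arrive without a referrer and get counted as Direct.
    """
    if not 0.0 <= misattributed_share < 1.0:
        raise ValueError("misattributed_share must be in [0, 1)")
    # Tagged sessions are the (1 - share) slice of the true total.
    total = tagged_ai_sessions / (1.0 - misattributed_share)
    hidden = total - tagged_ai_sessions
    return {"total_ai": total, "hidden_in_direct": hidden}


def adjusted_direct(reported_direct_sessions: float,
                    hidden_in_direct: float) -> float:
    """Direct sessions left after removing the estimated AI share."""
    return max(reported_direct_sessions - hidden_in_direct, 0.0)


# Hypothetical month: 300 tagged AI referral sessions, 5,000 Direct sessions.
estimate = estimate_hidden_ai_sessions(tagged_ai_sessions=300)
print(round(estimate["total_ai"]))
print(round(adjusted_direct(5000, estimate["hidden_in_direct"])))
```

The output is only as good as the assumed share, so treat it as a range-finder for the size of the problem rather than a number to report.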

Jasper's 2025 State of AI in Marketing survey of more than 500 marketers found that 51% of marketing teams cannot measure the ROI of their AI investments. Most of the profession is flying partially blind on what is simultaneously becoming the most important upstream channel for demand formation.

Here's the paradox. AI-sourced traffic that does make it through converts dramatically better than anything else in the mix. Across multiple independent studies, AI referral traffic converts at rates 4x to 14x higher than standard organic. The form builder Tally reported that ChatGPT became their #1 referral source in 2025. Users who show up from an AI citation have already been pre-qualified by the AI, already seen a comparison, and already decided the brand is worth a visit. They arrive to validate a decision, not to start making one. So even as total sessions drop, the quality of the remaining sessions spikes. The issue is that if 70% of that traffic is misattributed as Direct, the channel driving the highest-converting visitors in the entire stack also looks like the channel generating the least measurable revenue.

How Does the Compressed Decision Process Actually Work?

Step back from the dashboards and the shift stops looking mysterious. People haven't become worse shoppers. They've delegated the tedious parts of shopping.

The messy middle that Google's behavioral researchers documented in 2020 (the loops between exploration and evaluation, driven by biases like social proof, authority, and category heuristics) hasn't gone away. It's been outsourced. A prompt like “which noise-cancelling headphones under $300 have the best battery life for a daily commute” asks the AI to do what the user used to do themselves: read the reviews, weigh the specs, check the forums, produce a shortlist with reasoning. The cognitive biases haven't disappeared either. They've moved into the training data and the citation patterns of the models.

The resulting buyer path has a strange new shape. Top and middle of funnel compress into a single AI session the brand can't observe. Bottom of funnel expands in importance, because by the time a buyer lands on a vendor website, most of the decision is already made. They aren't there to learn. They're there to validate. They want to see pricing that matches what the AI quoted, a demo that matches what the AI described, and the absence of red flags (bad reviews on Trustpilot, security posture questions, slow support) that would knock the brand off the shortlist the AI handed them.

A concrete B2B example. An advisor evaluating CRM software in 2023 would run a search, click a Capterra page, click a comparison article on Forbes Advisor, visit three vendor websites, watch two YouTube demos, and maybe post in r/financialadvisors asking for real-world takes. Twenty sessions over a week and a half. The same advisor in 2026 asks Claude “what's the best CRM for a 3-advisor RIA that integrates with Orion and handles 401k rollover workflows”, reads the answer, maybe asks two follow-ups, and shows up on Redtail's pricing page the next day. Two or three measurable touches, all of them at the very bottom of the funnel. Redtail's marketing team sees a “direct” visit with high intent and no idea what drove it.

Marketplace sites see this pattern acutely. At Auction Technology Group I watched this shift start to play out across our sites including Proxibid, LiveAuctioneers, and The-Saleroom. A collector asking ChatGPT “what's a fair auction estimate for a vintage Omega Speedmaster Professional 145.022” gets a synthesized answer drawing from those marketplaces, Heritage Auctions, and a handful of specialist horology forums. The sources get cited at the bottom of the answer. The collector has the number they wanted and never clicks through to any of them. Auction houses that previously captured that query as a high-intent session now see a marginal lift in direct traffic to the item page, if the collector returns at all. Multiply that across every “what's it worth” and “where can I bid on X” query and the traffic impact on auction marketplaces is measured in double digits, even when the sites are still doing the work of answering the question for the entire category.

The business consequence is that demand is pre-formed before any measurable marketing event happens. B2B SaaS sales teams have been saying this openly since mid-2025: inbound leads arrive with narrower shortlists and stronger preferences than they did in 2023. Deals close faster but there's less opportunity to reshape the buyer's frame once they're in pipeline. Brands that were in the AI's answer at the moment of shortlisting win outsized share. Brands that weren't usually don't know they lost, because they were never in a measurable consideration set.


How Do You Reconcile This Through Data?

Recovering measurement in a compressed, AI-mediated funnel is not a matter of installing one more pixel. It requires accepting that no single system will ever see the whole picture again, and then triangulating from a handful of imperfect signals. The emerging discipline has five anchors.

1. Track Upstream Visibility, Not Just Downstream Clicks

Because the decisive moment happens inside the AI response, the first thing to measure is whether the brand shows up there at all. The metrics that have crystallized in 2025 and 2026 include:

- Mention rate: how often the brand name appears in AI answers across a defined set of category prompts. If you sell marketing automation, run a panel of 30 to 50 prompts like “what are the top marketing automation platforms for B2B SaaS” or “best email automation tools for e-commerce under $200 per month” and track what percentage cite you.

- Citation rate: how often the AI specifically links to your own content as a source.

- Share of voice (sometimes called Share of Model): your mentions as a percentage of all brand mentions in the category, benchmarked against direct competitors. If ChatGPT answers 100 category prompts and names HubSpot 62 times and you 9 times, your mention rate is 9% against HubSpot's 62%, and your share of voice is 9 divided by the total number of brand mentions across those answers. Either way, HubSpot's dominance is a real competitive problem you can put a number on.

- Sentiment and factual alignment: whether the AI describes you accurately and favorably. This one matters more than people realize, because LLMs get facts wrong. In my own brand monitoring I can see ChatGPT occasionally confusing my name with Raymond Wong, a famous film producer from Hong Kong. Not catastrophic, but an entity-disambiguation problem I can only fix once I can see it. A 50% mention rate means nothing if the AI is telling users your product is “overpriced and buggy”, or attributing the wrong body of work to you entirely.

- Query coverage: the breadth of topics where your brand surfaces at all. You might own “best marketing automation for enterprise” and be invisible on “marketing automation for agencies”. That gap is where a competitor is quietly eating your lunch.
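The first and third metrics in the list above can be sketched in a few lines of Python. This is a minimal illustration, not how any of the commercial tools work. It assumes you have already collected the AI answers for your prompt panel as plain strings, and the brand names and sample answers are made up. Mention rate is the share of answers that name the brand, and share of voice is the brand's mentions over all tracked-brand mentions.

```python
import re
from collections import Counter


def count_mentions(responses: list[str], brands: list[str]) -> Counter:
    """Count how many responses mention each brand at least once."""
    counts = Counter({brand: 0 for brand in brands})
    for text in responses:
        for brand in brands:
            # Case-insensitive whole-word match; brand names that start or end
            # with punctuation would need a different boundary rule.
            if re.search(rf"\b{re.escape(brand)}\b", text, flags=re.IGNORECASE):
                counts[brand] += 1
    return counts


def mention_rate(counts: Counter, brand: str, n_responses: int) -> float:
    """Share of panel answers that name the brand."""
    return counts[brand] / n_responses if n_responses else 0.0


def share_of_voice(counts: Counter, brand: str) -> float:
    """Brand mentions as a share of all tracked-brand mentions."""
    total = sum(counts.values())
    return counts[brand] / total if total else 0.0


# Hypothetical panel output: one string per prompt in the panel.
responses = [
    "HubSpot and Marketo lead the category.",
    "Consider HubSpot or ActiveCampaign for smaller teams.",
    "Acme Automation is a solid budget pick, alongside HubSpot.",
    "Marketo is strong for enterprise.",
]
brands = ["HubSpot", "Marketo", "Acme Automation"]

counts = count_mentions(responses, brands)
for brand in brands:
    print(brand,
          f"mention rate {mention_rate(counts, brand, len(responses)):.0%}",
          f"share of voice {share_of_voice(counts, brand):.0%}")
```

Counting each answer once per brand keeps a single verbose answer from skewing the numbers. Citation rate would need the source URLs returned with each answer, which this sketch does not model.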

Profound, Peec AI, Scrunch AI, Semrush One, and Ahrefs Brand Radar have all launched tooling for this in the last 12 months. The category is still immature and the benchmarks shift monthly, but you can get a usable baseline in an afternoon.

For smaller brands and solo operators, you don't actually need to pay for any of this. I've built my own version on top of the OpenAI API, Google's Natural Language API for entity extraction and sentiment scoring, BigQuery for storage, and Looker Studio for dashboards. Cheaper than SaaS, more flexible, and you own the data. I wrote about the full setup in Brand Monitoring on LLM AI Search, including how to use salience scores to identify the entities that AI systems actually associate with your brand.

2. Recover What GA4 Can Still Recover

Custom channel groups in GA4 built with regex patterns catch referrals from the platforms that do still pass headers. A starter regex applied to Session source looks something like `.*(chatgpt\.com|chat\.openai\.com|perplexity\.ai|gemini\.google\.com|copilot\.microsoft\.com|you\.com|phind\.com|searchgpt|claude\.ai).*`, where chatgpt.com is included because ChatGPT now serves from that domain rather than chat.openai.com. That won't catch everything (mobile apps and in-app browsers still strip referrers), but it catches enough to start a real trend line. Rename the channel “AI Referral” and you'll see it as a row in your Acquisition reports within 24 hours.
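Before pasting a pattern into GA4, it can help to check it against a handful of source values offline. The sketch below does that in Python. The pattern is the starter regex with chatgpt\.com included, since ChatGPT now serves from that domain. The sample sources are hypothetical, and `fullmatch` is used on the assumption that the GA4 condition is the full-match “matches regex” type, which is why the pattern is wrapped in `.*`.

```python
import re

# Starter pattern for AI referral sources. Adjust the list to your own data.
AI_SOURCE_PATTERN = re.compile(
    r".*(chatgpt\.com|chat\.openai\.com|perplexity\.ai|gemini\.google\.com"
    r"|copilot\.microsoft\.com|you\.com|phind\.com|searchgpt|claude\.ai).*",
    re.IGNORECASE,
)


def classify_source(session_source: str) -> str:
    """Label a session source as 'AI Referral' or 'Other'."""
    # fullmatch mimics a whole-string regex condition (an assumption here).
    if AI_SOURCE_PATTERN.fullmatch(session_source):
        return "AI Referral"
    return "Other"


# Hypothetical Session source values as they might appear in an export.
samples = ["perplexity.ai", "www.perplexity.ai", "chatgpt.com",
           "google", "(direct)", "newsletter"]

for source in samples:
    print(f"{source:20} -> {classify_source(source)}")
```

Running real exported source values through a check like this is a cheap way to spot both misses and false positives (a loose token such as you\.com can match unrelated domains that happen to end the same way).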

Server log analysis catches AI crawler activity directly. Look for user agents like GPTBot, ChatGPT-User, ClaudeBot, PerplexityBot, and OAI-SearchBot. Google-Extended is often listed alongside these, but it is a robots.txt control token rather than a request user agent, so it will not show up in access logs. Crawl frequency on a given URL is a leading indicator that the content is being used in AI answers, usually with a 30 to 60 day lag before citation traffic shows up. If you aren't already piping server logs into BigQuery or Looker Studio, now is the time. A one-hour Screaming Frog crawl plus a basic Python script on your access logs will surface more AI crawler data than any GA4 report will.
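A basic version of that access-log script might look like the following. It is a sketch that assumes the common “combined” log format used by Apache and Nginx; the sample lines and user-agent strings are illustrative, not copied from real traffic. Google-Extended is left out because it is a robots.txt control token and does not appear as a request user agent.

```python
import re
from collections import Counter

# Substrings to look for in the User-Agent header.
AI_BOT_TOKENS = ["GPTBot", "ChatGPT-User", "OAI-SearchBot",
                 "ClaudeBot", "PerplexityBot"]

# Combined log format: ... "METHOD /path PROTO" status bytes "referer" "user-agent"
LOG_LINE = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def count_ai_crawler_hits(lines):
    """Count hits per (bot, path) for known AI user-agent tokens."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match:
            continue  # skip lines that are not in the expected format
        user_agent = match.group("ua").lower()
        for token in AI_BOT_TOKENS:
            if token.lower() in user_agent:
                hits[(token, match.group("path"))] += 1
                break
    return hits


# Illustrative log lines (IP addresses are from documentation ranges).
sample_log = [
    '203.0.113.5 - - [12/Jan/2026:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.2; +https://openai.com/gptbot)"',
    '203.0.113.5 - - [12/Jan/2026:10:05:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.2; +https://openai.com/gptbot)"',
    '198.51.100.7 - - [12/Jan/2026:10:06:00 +0000] "GET /compare HTTP/1.1" 200 8300 "-" "Mozilla/5.0 (compatible; PerplexityBot/1.0)"',
    '192.0.2.9 - - [12/Jan/2026:10:07:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "https://example.com/" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]

for (bot, path), n in count_ai_crawler_hits(sample_log).most_common():
    print(f"{bot:15} {path:12} {n}")
```

In practice you would pass an open file handle instead of the sample list, and probably bucket the counts by day to see crawl frequency change over time. User-agent strings can be spoofed, so high-stakes analysis should also verify source IPs against the ranges each vendor publishes.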

UTM-tagging canonical URLs that are likely to get cited (pricing pages, comparison pages, definitive guides) helps attribute some of what's lost. It's not clean, but it's better than nothing.
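One way to generate tagged variants consistently is a small helper like the one below. This is a sketch; the parameter values (`ai-citation`, `referral`, `pricing-page`) are hypothetical naming choices, not a standard. It preserves any existing query parameters and replaces UTM values that are already present.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def add_utm(url: str, source: str, medium: str, campaign: str) -> str:
    """Return the URL with utm_source, utm_medium, and utm_campaign set."""
    utm = {"utm_source": source, "utm_medium": medium, "utm_campaign": campaign}
    parts = urlsplit(url)
    # Keep existing non-UTM parameters, drop stale values for the three we set.
    params = [(key, value)
              for key, value in parse_qsl(parts.query, keep_blank_values=True)
              if key not in utm]
    params.extend(utm.items())
    return urlunsplit(parts._replace(query=urlencode(params)))


# Hypothetical example URL and tag values.
print(add_utm("https://example.com/pricing?plan=team",
              source="ai-citation", medium="referral", campaign="pricing-page"))
```

The tags only help when the tagged URL is the one that actually gets cited, so this is a partial recovery at best.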

3. Infer Influence From Secondary Signals

When direct traffic grows faster than branded search in Search Console, the gap is almost always AI-influenced dark traffic. When branded search volume spikes without a campaign to explain it, the cause is often AI citation. When conversion rates on direct traffic climb above the historical baseline (say from 3% to 5%), the excess is likely AI-informed visitors arriving pre-sold.

None of these are clean attribution. Together they outline the shape of influence GA4 can't see directly. Some agencies are formalizing this as a “Revenue Attribution Decay Model” that credits dark-funnel pipeline back to the channels that earned it. The typical output is a recalibrated ROAS figure meaningfully higher than what the dashboard reports. Imperfect, but closer to truth than pretending the missing data doesn't exist.
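The comparisons above reduce to simple arithmetic once the inputs are exported. The Python sketch below computes the growth gap between direct sessions and branded search, plus the conversion-rate lift on direct traffic. All input numbers are hypothetical, the function names are illustrative, and nothing here amounts to attribution; it only puts the three signals side by side.

```python
def pct_growth(previous: float, current: float) -> float:
    """Period-over-period growth as a fraction (0.35 means +35%)."""
    if previous <= 0:
        raise ValueError("previous must be positive")
    return (current - previous) / previous


def dark_traffic_signals(direct_prev: float, direct_curr: float,
                         branded_prev: float, branded_curr: float,
                         cr_baseline: float, cr_current: float) -> dict:
    """Summarize the secondary signals for one comparison period."""
    direct_growth = pct_growth(direct_prev, direct_curr)
    branded_growth = pct_growth(branded_prev, branded_curr)
    return {
        "direct_growth": direct_growth,
        "branded_growth": branded_growth,
        # Positive gap: Direct is growing faster than branded search explains.
        "growth_gap": direct_growth - branded_growth,
        # Conversion-rate lift on Direct, in percentage points.
        "cr_lift_points": (cr_current - cr_baseline) * 100,
    }


# Hypothetical year-over-year inputs: GA4 direct sessions, Search Console
# branded clicks, and the direct-traffic conversion rate (3% to 5%).
signals = dark_traffic_signals(
    direct_prev=10_000, direct_curr=13_500,
    branded_prev=4_000, branded_curr=4_400,
    cr_baseline=0.03, cr_current=0.05,
)
for name, value in signals.items():
    print(f"{name:16} {value:.2f}")
```

A large positive gap together with a conversion-rate lift is the pattern described above. Either signal alone has plenty of other explanations, such as offline campaigns, seasonality, or tracking changes.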

4. Change What Marketing Is Held Accountable For

The honest reframe is that the old question, “how much revenue did this channel generate”, is becoming unanswerable for the upstream half of the funnel. The question that replaces it is closer to “how present are we in the conversations where our category is being decided”. That's a brand and visibility question, not a last-click performance question.

The trade-off is that it requires senior stakeholders, CFOs, and boards to accept measurement built on triangulation and leading indicators rather than line-item causality. That conversation is harder than the technical implementation, especially with finance teams that have spent 15 years drilling “data-driven” into marketing. In-house SEOs have the worst version of this problem. SEO has always been a black box for most executives. They drive a high share of traffic, get taken for granted, and their leadership generally assumes the traffic would have come anyway had they done nothing. Now more than half of that traffic is also dark. Good luck.

5. Defend Your Position in the Corpus

AI systems cite heavily from a narrow set of high-authority domains. Pew's analysis of actual AI Overview citations found Wikipedia, YouTube, and Reddit as the top three sources. Semrush data on broader AI citations found Reddit accounting for 40% of generative AI citations worldwide, Wikipedia 26%, and YouTube 23%. If you aren't present on those platforms, you're structurally disadvantaged regardless of how well your own site ranks. A SaaS brand with a great blog, no Reddit presence, no YouTube channel, and no Wikipedia entry is invisible to about 90% of AI citation weight.

Practical work here includes earning third-party mentions on credible domains, seeding structured comparison data the models can extract (clean pricing tables, clear feature matrices, named competitors), and maintaining factual accuracy across the open web because AI models will synthesize whatever is out there. Princeton's 2024 GEO research (Aggarwal et al., presented at KDD 2024) quantified that specific content choices (citing primary sources, including statistics, embedding expert quotations) can improve inclusion rates in AI answers by up to 40%.

Final Thought

The collapse of click-based measurement is uncomfortable. It is not the end of measurable marketing. It's the end of one particular kind of measurable marketing, the kind that treated every conversion as the output of a traceable sequence of clicks. That era was shorter than it felt and was always an incomplete picture. Dark social, word of mouth, and offline influence have always existed outside the pixel. AI search has just made the invisible portion the majority of what drives buying decisions.

The uncomfortable part is that the performance-marketing muscle memory most digital teams were built on (optimize the click, attribute the conversion, scale the channel) is a weak match for a world where the decisive moment happens inside a conversation no one owns. Teams that rebuild around a wider definition of measurement (citations, mentions, share of model, branded demand signals, conversion-rate lift on unattributed traffic) will have a defensible read on what's working. Teams that wait for GA4 to start reporting AI Overviews cleanly will be waiting for a signal that may never arrive in a form they recognize.

The funnel didn't disappear. It moved somewhere the dashboard can't see. The work is to measure what you can't directly observe.

Sources

- Seer Interactive, AIO Impact on Google CTR: September 2025 Update

- Ahrefs, AI Overviews Reduce Clicks by 58% (December 2025 update)

- Pew Research Center, Do people click on links in Google AI summaries?

- Digital Content Next, Google's AI Overviews linked to lower publisher clicks

- Think with Google, The Messy Middle of Purchase Behavior

- Forrester, The Future of B2B Buying Will Come Slowly… and Then All at Once

- Forrester, State of Business Buying, 2026

- Gartner, 80% of B2B Sales Interactions Will Occur in Digital Channels by 2025

- Loamly, The AI Traffic Attribution Crisis

- Jasper, 2025 State of AI in Marketing

- Semrush, AI Overviews Study

- Aggarwal et al., Princeton / Georgia Tech / IIT Delhi / Allen Institute, GEO: Generative Engine Optimization, KDD 2024

- Search Engine Journal, Google AI Overviews Impact On Publishers

- AdExchanger, The AI Search Reckoning Is Dismantling Open Web Traffic

- The Digital Bloom, 2025 Organic Traffic Crisis: Zero-Click & AI Impact Report

- Search Engine Land, What is Generative Engine Optimization (GEO)?