Claude Code is an agentic command-line tool built by Anthropic that reads your local files, writes and edits code across a project, runs shell commands, and connects to external services through the Model Context Protocol (MCP). For software engineers it is a productivity multiplier. For SEOs and performance marketers, it is something stranger and more consequential: the first general-purpose tool that collapses the distance between identifying a problem and executing the fix in the same afternoon, without filing a ticket with the dev team.

I have been doing AI-augmented SEO work in Python for a while now. My Google Colab notebooks include a cosine-similarity content relevance scorer that ranks on-page passages by vector-embedding distance to a target keyword. I have a separate notebook that prompts ChatGPT and Gemini about my brand daily, extracts entities with Google Natural Language API, and writes the results to BigQuery for Looker Studio dashboards. I have used SQL against my Search Console BigQuery export to mine multi-word queries and cluster them into AI-Overview-prone topics. These workflows exist on this site if you want to read about them. None of this is new.

What is new is that Claude Code removes almost all of the friction that kept these workflows in Colab and off most SEOs' workstations. The honest truth is that most marketers will never open a Jupyter notebook, and most of the ones who will still won't schedule a notebook to run daily, manage its dependencies, or version-control the output. Claude Code does not require them to. They describe the outcome, Claude writes the script, runs it, and shows the result. That is the real shift.

I want to be clear about the scope of this piece. This is not another “here are 7 ways to audit your site” listicle. That content exists in abundance and I will link to the better examples at the bottom. What I want to do is explain what Claude Code actually is, why I think it matters more than the SEO industry currently realizes, and where it fits into a modern marketer's workflow based on the kind of work I have been doing over the last 20 years. If you stick with me to the end I will also cover where it falls short, because the hype around agentic coding is outpacing the reality in a few places.

What is Claude Code?

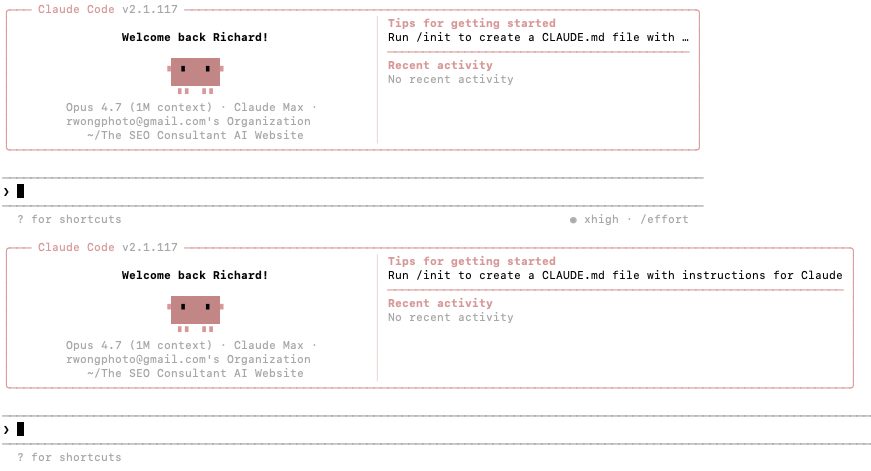

Claude Code is a terminal-first agentic coding tool. You install it, navigate into a project directory, type claude, and you are in a conversation with an AI agent that has read access to your files, write access if you grant it, and the ability to execute shell commands when you approve them. It runs on Anthropic's Claude model family (Opus 4.7 is the current flagship, Sonnet 4.6 is the workhorse, Haiku handles fast exploration work) and supports a 1 million token context window on Opus, which in practice means it can hold a substantial codebase and a month of analytics data in working memory at the same time.

That is the mechanical answer. The more useful answer is that Claude Code is what a competent junior analyst would be if the junior analyst could write Python fluently, never got tired, read documentation perfectly, and ran in parallel across multiple tasks. It plans, it executes, it verifies, it iterates. When a script it wrote fails a test, it reads the error, fixes the script, and reruns. That loop is the whole thing.

Claude Code is available as a terminal CLI, as plugins for VS Code, Cursor, Windsurf and JetBrains IDEs, on the web at claude.ai/code for long-running tasks, and on Anthropic's desktop app. It also ships with a headless mode (invoked with the --print flag) that lets you pipe it into other Unix tools or run it in CI pipelines. This composability is underrated. You can tail -n 200 server.log | claude -p "flag anomalies in Slack" and mean it literally. Stripe rolled out Claude Code to all 1,370 of their engineers as a pre-installed signed enterprise binary, and one team used it to complete a 10,000-line Scala-to-Java migration in four days, work originally estimated at ten engineer-weeks. That is the kind of specific outcome that makes the “AI is writing real code now” claim concrete instead of aspirational. Ramp engineers report processing over one million lines of AI-suggested code monthly, and Anthropic itself says the majority of code across the company is now written by Claude Code. These are not anecdotes. They are the new baseline.
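To make the headless mode concrete, here is a minimal Python sketch of the same pipe pattern. It assumes the `claude` binary is on your PATH and relies only on the `-p` / `--print` flag mentioned above; the helper names (`build_headless_command`, `ask_claude`, `tail`) are hypothetical and exist only for this illustration.

```python
import subprocess


def build_headless_command(prompt):
    """Return the argv for a one-shot, non-interactive Claude Code call."""
    # `-p` is the short form of `--print`, the headless flag described above.
    return ["claude", "-p", prompt]


def ask_claude(piped_text, prompt, runner=subprocess.run):
    """Send `piped_text` on stdin and return whatever is printed to stdout.

    `runner` is injectable so the function can be exercised without the CLI.
    """
    result = runner(
        build_headless_command(prompt),
        input=piped_text,
        capture_output=True,
        text=True,
        check=True,  # raise if the command exits with a non-zero status
    )
    return result.stdout.strip()


def tail(path, n=200):
    """Pure-Python stand-in for `tail -n`: the last `n` lines of a text file."""
    if n <= 0:
        return ""
    with open(path, encoding="utf-8", errors="replace") as handle:
        return "".join(handle.readlines()[-n:])
```

With the CLI installed, `ask_claude(tail("server.log"), "flag anomalies")` would be the scripted equivalent of the shell one-liner, which is the shape you would drop into a cron job or a CI step.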

Claude Code is not free. It requires an Anthropic subscription: Claude Pro (starts around $20/month), Claude Max (for heavier users, tiered higher), or Console/API billing if you want to pay per token. Installation is straightforward on Mac, Linux, and Windows (via WSL); Anthropic's official installer handles the setup in a few minutes, and there are signed enterprise binaries for organizations with procurement requirements. For SEOs evaluating the cost, a Pro subscription covers most solo consulting workloads. The compute cost only becomes meaningful when you are running heavy subagent work, scheduled routines, or large crawls, in which case API billing is the cleaner model because it scales with actual usage. See Anthropic's docs at docs.claude.com for the current install steps and subscription tiers.

How is Claude Code different from Claude.ai or ChatGPT?

This is the question I get most often from non-technical marketers, and the answer matters. Claude.ai and ChatGPT are chat interfaces. You type, they respond, you copy the output somewhere useful. Claude Code is an agent that acts inside your environment. The difference is not cosmetic.

In Claude.ai, if you ask for a Python script that pulls Google Search Console data and clusters queries by intent, Claude writes the script and you get a code block. You still have to copy it to your machine, install dependencies, authenticate with Google, run it, read the output, feed that output back into the chat if you want analysis, and repeat. That is four hops across three environments (browser to terminal to Python and back to the browser) and at least a dozen context switches. In Claude Code, you describe the same outcome in plain English. Claude writes the script in your project folder, installs the dependencies itself (with your approval), runs the authentication flow, executes the script, reads the output file, and writes you a summary of what it found. Same Python, same APIs, but the friction is gone.

Cursor fills a middle ground here and many people use both. Cursor is a VS Code fork with AI built into the editing experience, which is great for writing and editing code inside the IDE. Claude Code runs deeper. It is the right tool when the task crosses many files, requires shell access, or needs to hand subtasks to parallel subagents. A lot of marketers run Claude Code inside Cursor, because Cursor's editor UI is more approachable than a raw terminal. That is the setup Will Scott described in his Search Engine Land piece and it is a reasonable starting point.

Claude Code vs Cursor vs Codex CLI

I get asked about this comparison constantly, so here is the short version.

| Tool | Interface | Best for | Primary model | Agent depth |
|---|---|---|---|---|
| Claude Code | Terminal CLI, IDE plugins, web, desktop | Multi-file tasks, subagent workflows, CI/CD, scheduled routines, MCP integrations | Claude Opus 4.7 / Sonnet 4.6 / Haiku 4.5 | Deep: plans, executes, iterates, verifies |
| Cursor | VS Code fork (IDE) | In-editor AI assistance, inline autocomplete, single-file and short-range multi-file edits | Multi-model (Claude, GPT, others) | Medium: strong in-editor, weaker on shell and long-running tasks |
| Codex CLI | Terminal CLI | Terminal-native agentic coding for teams committed to the OpenAI stack | GPT-5 and OpenAI model family | Deep: similar architecture to Claude Code, different model backbone |

Most of the developers I know who take agentic coding seriously use two of these together. The common pairing is Claude Code plus Cursor: Claude Code for the deep multi-file work and Cursor for the in-editor experience where you want visual diffs and inline edits. Codex CLI is the right pick if you are already on the OpenAI stack for other reasons and want a unified vendor relationship. In my own testing and in most third-party reviews of complex multi-file reasoning and instruction following, Claude's models have generally led in 2026, but the gap narrows and widens month to month, so test for your specific workflow rather than taking anyone's word for it (including mine).

For marketers specifically, Claude Code is the better default because the SEO and analytics workflows I describe in this article depend heavily on MCP, subagents, and scheduled routines. Cursor is fine as a supplement. Codex CLI is fine if you have an OpenAI reason to use it. If you are starting from scratch and you want one tool, start with Claude Code.

Why does this matter for SEOs and marketers specifically?

Because SEO has always been bottlenecked by engineering capacity, and Claude Code removes the bottleneck.

Think about what SEO work actually involves at the technical end. You identify an issue: let's say your site has 4,200 product pages with duplicate meta descriptions, 600 pages throwing soft 404s, and a canonical tag implementation that is pointing cross-domain. You document the issue. You write a ticket. You justify it against the engineering team's roadmap. You wait. Several months later, if you are lucky and the business has a reason to prioritize your work, the fixes ship. Meanwhile the crawl budget is getting wasted, the pages are not indexing properly, and the traffic you should already have sits waiting on someone else's sprint.

I have lived this on both the in-house and agency side since 2008. At The Search Agency we worked on marketplace and real-estate sites where the gap between “we know what to fix” and “fixed and live” could be the entire client engagement. At Auction Technology Group, where I was responsible for eight marketplace sites including Proxibid, LiveAuctioneers, The-Saleroom, Bidspotter, and EstateSales.net, technical SEO required navigating multiple codebases with multiple engineering teams across multiple time zones. Even with a sophisticated operation, the ticket-and-wait model is the dominant mode of technical SEO execution for most companies.

Claude Code changes the unit economics of that work. If your fix can be expressed in code, and the code is something a capable AI agent can write (which covers almost every SEO fix), you do not need to wait. You need to know what the fix is, write a good prompt, review the output, and open a pull request. The engineering team still reviews and ships, but you are no longer waiting for them to do the work. You are waiting for them to approve it.

It is fair to push back on that point of view. A thoughtful engineering manager would say, correctly, that shipping AI-generated code without careful review is how you end up with security vulnerabilities, test regressions, and unreadable spaghetti that nobody wants to maintain. Fine. The review still has to happen. What does not have to happen anymore is the weeks of waiting between the SEO identifying the fix and the code being written.

What does my current Claude Code SEO workflow look like?

Let me show you rather than tell you. I am going to work through a handful of specific workflows I either already run or am in the process of migrating from Google Colab into Claude Code. These are not hypothetical.

Workflow 1: Search Console data mining at scale

Google Search Console only surfaces 1,000 rows of query data in the UI. I have written about this before. If you export GSC to BigQuery (and you should, because every day you are not exporting is data lost forever once the 16-month window rolls forward) you have access to hundreds of thousands or millions of rows, and the underrated feature of an LLM is that it can cluster that data semantically in a way spreadsheets can't.

In Colab my workflow was: write a SQL query against my BigQuery dataset, export the result to a pandas DataFrame, pass the queries as a prompt to Gemini asking for cluster assignments, parse the response back into a DataFrame, and visualize with matplotlib or a dendrogram. That was a lot of glue code that I had to maintain across sessions. In Claude Code I describe what I want in one sentence: “Pull the last 30 days of search queries from my BigQuery GSC export where length is four words or more, cluster them by likelihood of triggering an AI Overview, and write a dendrogram to a PNG.” Claude writes the SQL, runs it, writes the Python, executes the clustering, saves the image, and summarizes. The prompt is the deliverable.
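For a sense of what sits behind that one-sentence prompt, here is a rough sketch rather than the script Claude actually generates. The dataset name `my-project.searchconsole` is a placeholder, the table and column names assume the standard Search Console bulk-export layout, and the bucketing by leading question word is a deliberately crude stand-in for the LLM-based clustering step.

```python
from collections import defaultdict

# Assumption: placeholder project/dataset; table and columns follow the
# Search Console bulk-export layout. Anonymized queries come back as NULL.
LONG_QUERY_SQL = """
SELECT query, SUM(impressions) AS impressions, SUM(clicks) AS clicks
FROM `my-project.searchconsole.searchdata_site_impression`
WHERE data_date >= DATE_SUB(CURRENT_DATE(), INTERVAL 30 DAY)
  AND query IS NOT NULL
  AND ARRAY_LENGTH(SPLIT(TRIM(query), ' ')) >= 4
GROUP BY query
ORDER BY impressions DESC
"""

# Hypothetical bucket labels, used only to illustrate the grouping step.
QUESTION_WORDS = {"how", "what", "why", "when", "which", "who", "can", "does", "is", "should"}


def is_long_query(query, min_words=4):
    """Mirror the SQL filter locally: keep queries of `min_words` words or more."""
    return len(query.split()) >= min_words


def bucket_queries(queries):
    """Group long queries by their first word if it is a question word."""
    buckets = defaultdict(list)
    for query in queries:
        if not is_long_query(query):
            continue
        first = query.split()[0].lower()
        buckets[first if first in QUESTION_WORDS else "other"].append(query)
    return dict(buckets)
```

In a real run the buckets would come from an embedding model or an LLM call rather than a word list, but the shape is the same: one SQL filter, one grouping pass, one output you can inspect.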

Workflow 2: LLM brand monitoring

I have written about this in depth elsewhere on the site. The setup is five Python scripts that prompt ChatGPT and Gemini about my brand, extract entities and sentiment using Google Natural Language API, store the responses in BigQuery, and render time-series charts of entity salience and sentiment scores over time. It is useful because in AI search what matters is not where your site ranks on a SERP. What matters is how the model describes your brand when asked, and whether that description drifts over time as the model's training data and retrieval behavior change.
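The drift-tracking part of that pipeline can be sketched in a few lines. This is an illustration under assumptions, not the production scripts: the record shape (`date`, `model`, `sentiment`) is hypothetical, and the only thing taken from the Natural Language API is that its sentiment score runs from -1.0 to 1.0.

```python
from collections import defaultdict
from statistics import mean


def daily_sentiment(records):
    """Average sentiment per (date, model) pair.

    Each record is a dict with hypothetical keys: date, model, sentiment.
    """
    grouped = defaultdict(list)
    for record in records:
        grouped[(record["date"], record["model"])].append(record["sentiment"])
    return {key: mean(scores) for key, scores in grouped.items()}


def flag_drops(daily, threshold=0.0):
    """Return the (date, model) pairs whose average score is below `threshold`."""
    return sorted(key for key, score in daily.items() if score < threshold)
```

The threshold is a judgment call. A brand that normally scores around 0.3 probably wants an alert well before the average goes negative.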

Migrating this to Claude Code did three things. First, I no longer had to keep five Colab notebooks in sync. The whole flow is now one project directory with a CLAUDE.md file telling Claude what the project is and how the pieces fit together. Second, I can schedule the whole pipeline as a routine (a new Claude Code feature that runs on Anthropic's infrastructure so it keeps running when my computer is off), which is cleaner than the Google Cloud Scheduler setup I was considering. Third, when a prompt response comes back with an anomaly (for example, ChatGPT confusing me with Raymond Wong the Hong Kong film producer, which actually happened), I can ask Claude to dig into it in natural language instead of writing a new notebook.

Workflow 3: Cosine similarity content relevance

This one is from my February 2025 article on using vector embeddings to rank on-page content by cosine similarity. The idea is you generate embeddings for each paragraph of a page, compare them to the embedding of your target keyword, and sort your paragraphs from most relevant to least. The bottom 10% is where you focus your rewrites.
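The ranking logic itself is small. The sketch below swaps in a toy word-count vector for the real embedding model so that it runs with no API key; `embed` is a stand-in, and in practice you would replace it with a call to whichever embedding model you use. The cosine-similarity and bottom-10% steps are the parts that carry over.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy embedding: a word-count vector. Stand-in for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine_similarity(a, b):
    """Cosine of the angle between two sparse vectors stored as Counters."""
    dot = sum(a[token] * b[token] for token in a.keys() & b.keys())
    norm_a = math.sqrt(sum(value * value for value in a.values()))
    norm_b = math.sqrt(sum(value * value for value in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank_paragraphs(paragraphs, keyword):
    """Return (score, paragraph) pairs sorted from most to least relevant."""
    target = embed(keyword)
    scored = [(cosine_similarity(embed(p), target), p) for p in paragraphs]
    return sorted(scored, key=lambda pair: pair[0], reverse=True)


def weakest(ranked, fraction=0.10):
    """The bottom `fraction` of ranked paragraphs (at least one) to rewrite."""
    count = max(1, math.ceil(len(ranked) * fraction))
    return ranked[-count:]
```

Word counts only reward exact term overlap, which is exactly the limitation real embeddings remove, so treat the scores from this toy version as a demonstration of the mechanics rather than a relevance signal.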

In Colab it worked. In Claude Code it becomes a reusable skill. Once I have written the script and tested it on one article, I save it as a skill (a markdown file with instructions that Claude automatically loads when it sees a matching trigger) and from that point forward any time I say “run cosine relevance on this URL” Claude pulls the page, extracts the text, runs the embeddings, ranks the passages, and flags the weak ones for rewriting. The effort to add this to my process went from “notebook I open when I remember” to “sentence I type without thinking about it.” That is the entire point of agentic workflows.

This cosine-similarity work is also the foundation of QueryDrift.com, a tool I built that analyzes your Google Search Console data to detect when the queries landing on a page have drifted away from what the page is actually about. It uses cosine similarity plus LLM validation to score drift per URL, surface topical gaps, flag semantic duplication across pages, and recommend specific content fixes. If you want the productized version of the workflow I just described, that is what QueryDrift does. Claude Code is how I build and iterate on QueryDrift itself. It is also how I build similar custom tooling for consulting clients whose problems are specific enough that a generic SaaS tool won't cut it.

Workflow 4: Technical SEO audits

Every SEO tool on the market does some version of a technical audit. Screaming Frog, Ahrefs Site Audit, Semrush, Sitebulb. They are good and I still use them. What Claude Code adds is the ability to write a custom audit that actually looks at the specific problems your site has, in the specific ways your CMS generates them, with the specific prioritization rules your business cares about.

A representative example: I run a custom Python auditor that crawls a target URL, checks title and meta lengths, counts H1-H6 occurrences, verifies canonical consistency, extracts JSON-LD schema blocks, pulls Core Web Vitals from PageSpeed Insights, and optionally diffs what Googlebot sees versus what Chrome sees (this catches cloaking and JS-rendering issues, both of which will hurt you if the page is rendering differently for bots). The output is a prioritized fix list with critical, important, and nice-to-have tiers. Claude Code runs all of that. It is the skill I use most often in my consulting work and I can have it running against a new client site in minutes instead of days.
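A stripped-down version of the on-page checks can be written with nothing but the standard library. This is a sketch, not the auditor described above: it skips the crawl, PageSpeed Insights, and the Googlebot-versus-Chrome diff, and the length limits (60 and 155 characters) and tier assignments are illustrative assumptions.

```python
import json
from html.parser import HTMLParser

TIERS = ["critical", "important", "nice-to-have"]


class PageAudit(HTMLParser):
    """Collect title, meta description, canonical, heading counts, and JSON-LD."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.meta_description = None
        self.canonical = None
        self.heading_counts = {f"h{i}": 0 for i in range(1, 7)}
        self.json_ld = []
        self._in_title = False
        self._in_json_ld = False
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag in self.heading_counts:
            self.heading_counts[tag] += 1
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.meta_description = attrs.get("content") or ""
        elif tag == "link" and (attrs.get("rel") or "").lower() == "canonical":
            self.canonical = attrs.get("href")
        elif tag == "script" and attrs.get("type") == "application/ld+json":
            self._in_json_ld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_title:
            self.title += data
        elif self._in_json_ld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False
        elif tag == "script" and self._in_json_ld:
            self._in_json_ld = False
            try:
                self.json_ld.append(json.loads("".join(self._buffer)))
            except json.JSONDecodeError:
                self.json_ld.append({"error": "invalid JSON-LD"})


def audit(html, page_url, title_max=60, meta_max=155):
    """Return (issues, page), with issues sorted critical first."""
    page = PageAudit()
    page.feed(html)
    issues = []
    title = page.title.strip()
    if not title:
        issues.append(("critical", "missing title"))
    elif len(title) > title_max:
        issues.append(("important", f"title is {len(title)} characters"))
    if page.meta_description is None:
        issues.append(("important", "missing meta description"))
    elif len(page.meta_description) > meta_max:
        issues.append(("nice-to-have", "meta description may be truncated"))
    if page.heading_counts["h1"] != 1:
        issues.append(("important", f"expected one h1, found {page.heading_counts['h1']}"))
    if page.canonical is None:
        issues.append(("important", "missing canonical"))
    elif page.canonical.rstrip("/") != page_url.rstrip("/"):
        issues.append(("critical", "canonical points to a different URL"))
    issues.sort(key=lambda issue: TIERS.index(issue[0]))  # stable sort keeps check order
    return issues, page
```

The value of a custom auditor is in the rules you add on top of this skeleton, such as the CMS-specific patterns and business priorities that an off-the-shelf crawler cannot know about.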

Workflow 5: Content gap analysis with MCP

Model Context Protocol is, in my honest assessment, the more important half of the Claude Code story. MCP is an open standard that lets external services (Google Drive, Slack, Gmail, specialized SEO tools, your CRM) expose their functionality to AI agents through a consistent interface. Anthropic introduced it in November 2024. OpenAI adopted it in March 2025 across its Agents SDK and the ChatGPT desktop app. Google DeepMind's Demis Hassabis confirmed support for Gemini in April 2025. In December 2025, Anthropic donated the protocol to the Agentic AI Foundation under the Linux Foundation, with OpenAI and Block as co-founders and AWS, Google, Microsoft, Cloudflare and Bloomberg as supporting members. Roughly one year after launch, the ecosystem reportedly crossed 97 million monthly SDK downloads across Python and TypeScript. For SEOs what matters is that MCP turns every integrated service into a tool Claude Code can actually use, and that the standard is now genuinely cross-vendor rather than an Anthropic-only play.

I have the Infranodus MCP connected. Infranodus is a knowledge-graph tool that does entity extraction, topic clustering, content gap analysis, and SEO reporting by comparing a URL's semantic structure against the top Google results for a keyword. What used to require a tab in the Infranodus web app now happens inside Claude Code. I can say “compare my travel SEO consultant page to the top five organic results for 'travel SEO consultant' and tell me what entities I am missing,” and the MCP integration runs the analysis, returns the structured results, and Claude writes up a prioritized content-gap report. Same workflow used to take an hour of tab-switching and a careful read of the Infranodus output. Now it is three sentences.

If you are building an SEO stack in 2026 and Claude Code is part of it, the MCP connections you choose are more consequential than which agent you run. The agent is commodity. The tools are the differentiator.

Workflow 6: Schema markup and structured data at scale

Structured data (JSON-LD schema) is one of the higher-leverage technical SEO investments you can make. It helps search engines parse your content correctly, it is one of the few inputs that genuinely influences AI Overview and generative-search citation eligibility, and it is tedious to implement and maintain across a large site.

The usual problem: you have 5,000 pages, maybe a dozen of them have correct Product or Article schema, the rest either have nothing or have auto-generated schema from a CMS plugin that is technically valid but semantically wrong. You can pay for an enterprise schema platform. You can also run Claude Code with subagents in parallel. Claude Code supports spawning multiple instances that work on different parts of a task simultaneously. One subagent crawls the URL list and classifies each page by type (Product, Article, LocalBusiness, FAQ, HowTo, Event). Another inspects existing schema and flags errors, missing required fields, and mismatches against Schema.org specs. A third generates new JSON-LD for pages that need it, using the page's actual content rather than template placeholders. A fourth validates the output against Google's Rich Results Test requirements. The deliverable is a pull-request-ready set of schema changes with a validation report attached.
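Two of those subagent jobs, flagging incomplete schema and generating new JSON-LD, reduce to small functions. The sketch below is illustrative: `FIELDS_TO_CHECK` is a hand-picked subset for demonstration, not the full Schema.org or Google requirements, and the function names are hypothetical.

```python
import json

# Illustrative subset only. Check the current Schema.org and Google
# documentation for the real required and recommended properties.
FIELDS_TO_CHECK = {
    "Product": ["name", "offers"],
    "Article": ["headline", "datePublished", "author"],
}


def missing_fields(block):
    """List the checked fields that are absent or empty in a JSON-LD block."""
    expected = FIELDS_TO_CHECK.get(block.get("@type"), [])
    return [field for field in expected if not block.get(field)]


def build_product_json_ld(name, url, price, currency):
    """Build Product markup from real page values rather than template text."""
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "url": url,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
        },
    }


def to_script_tag(block):
    """Serialize a block for embedding in HTML."""
    # Escape "</" so page content can never close the script tag early.
    payload = json.dumps(block, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">{payload}</script>'
```

Passing local checks like these is a first filter, not a substitute for running the generated markup through Google's Rich Results Test before the pull request goes out.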

I would argue this is where agentic SEO genuinely outperforms human execution, not because a human could not do the analysis, but because no human is going to do it for 12,000 URLs on a Wednesday afternoon with the same care they would give to the top 50 money pages. Schema is also a place where small amounts of machine-generated code can translate into meaningful rich-result eligibility gains, which is a real business outcome and one of the few SEO levers where the measurement loop is relatively clean.

Workflow 7: Scheduled routines for ongoing monitoring

The last workflow is the one I am most excited about and the one most SEOs haven't tried yet. Claude Code supports scheduled routines that run on Anthropic's infrastructure, meaning they continue running when your local machine is off. For SEO this means you can schedule:

- A daily crawl of your top 500 URLs to flag status-code changes, indexability regressions, and schema breakage.

- A daily LLM brand-monitoring sweep that appends to your BigQuery table and alerts you if sentiment drops below a threshold.

- A weekly competitor SERP monitor that tracks feature changes (AI Overviews appearing, new SERP features, competitor content updates) for your priority keyword set.

- A monthly technical regression test that re-runs your full audit and diffs against last month to show what got better, what got worse, and what broke.

Any one of these is a Screaming Frog macro or a SaaS tool subscription. All of them together is an SEO operations layer that would cost thousands of dollars a month in tooling. Claude Code charges for compute, and the compute is not free, but the order-of-magnitude economics favor building.
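The core of the daily crawl and the monthly regression test is the same operation: diff today's snapshot against the last one. Here is a minimal sketch, assuming a hypothetical snapshot shape of one dict per URL with fields such as `status` and `indexable`; the scheduling itself is left to the routine.

```python
def diff_snapshots(previous, current):
    """Compare two crawl snapshots keyed by URL.

    Returns (url, what_changed, before, after) tuples in URL order.
    """
    changes = []
    for url in sorted(previous.keys() | current.keys()):
        before, after = previous.get(url), current.get(url)
        if before is None:
            changes.append((url, "new URL", None, after))
        elif after is None:
            changes.append((url, "dropped from crawl", before, None))
        else:
            # Report each field whose value differs between the two runs.
            for field in sorted(before.keys() | after.keys()):
                if before.get(field) != after.get(field):
                    changes.append((url, field, before.get(field), after.get(field)))
    return changes
```

Storing each run's snapshot as a dated JSON file in the project directory is enough to make this work, and it gives you an audit trail for free.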

Workflow 8: ICP persona research at scale

B2B marketing lives and dies on understanding your ideal customer profile. I wrote about this in my B2B SEO post (specifically the section on demonstrating expertise and warm-lead generation). The traditional approach to ICP research involves interviewing ten customers, reading through sales call transcripts, and synthesizing the patterns into a persona deck. That work is essential and it is also slow enough that most marketing teams do it once, maybe twice, and then never update it as the customer base evolves.

Claude Code collapses this into a project-directory workflow. You drop the raw materials into folders: customer interview transcripts, Gong or Chorus call recordings transcribed to text, support tickets exported from Zendesk, CRM segment exports from Salesforce or HubSpot, customer survey responses, inbound sales emails, churn-interview notes. Claude Code can then run subagents in parallel across the whole corpus. One subagent extracts pain points by customer segment. Another clusters objections by deal stage. A third identifies buying triggers and the language customers use to describe them. A fourth cross-references objection language against your existing website copy to flag the mismatch between what prospects actually say and what your site tells them.

The output is not a static persona deck. It is a living document you update every week by dropping new transcripts into the same folder and rerunning. For a B2B company with a clear ideal customer profile and a steady stream of sales conversations, this is probably the highest-leverage use of Claude Code in marketing that I can think of. It is also the workflow that benefits most from the 1 million token context window on Opus 4.7, because you can load months of conversation history into a single pass without losing fidelity.

The direct SEO tie-in: once you know what your ICP actually cares about in their own words, your content strategy writes itself. Keyword research stops being “what has search volume” and starts being “what is my ideal customer actually asking.” That is a meaningfully better brief for content, for landing pages, and for the way you describe your services.

Workflow 9: Ad-hoc data analysis across disconnected sources

Most interesting SEO and marketing questions cross data sources. “Which keywords am I paying for in Google Ads that I already rank organically in the top 3?” combines Search Console data with Google Ads search-term reports. “Which of my blog posts get organic traffic but have zero conversions?” combines GSC with GA4 events. “Which competitor pages outrank me where my page has better Core Web Vitals?” combines SERP data, Lighthouse data, and your own crawl output. “What topics does my content cover that my ICP never actually mentions in sales calls?” combines your site's semantic graph with your CRM's call transcript corpus. No single SEO tool answers these questions well. Spreadsheets technically can but in practice the work takes a Thursday afternoon, and most of the time the question never gets answered because it is not worth a Thursday afternoon.

Claude Code handles these questions conversationally. If you have the source data either in BigQuery, in CSV exports, or accessible through an MCP-connected service, you can ask the question in plain English and get back a joined analysis with the underlying query shown so you can verify it. That verification step matters and I cannot stress it enough. I have personally seen Claude Code confidently report a number that did not match the underlying JSON file. It happens rarely but it happens, so spot-checking against the raw data is part of the discipline. Trust but verify, especially before anything goes to a client.
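To show what a "joined analysis" means in practice, here is a sketch of the first question above (paid search terms that already rank organically in the top 3) using two CSV exports. The column names (`query`, `position`, `search_term`, `cost`) are assumptions about how the exports are labeled, so adjust them to match your files.

```python
import csv
import io


def paid_terms_ranking_organically(gsc_rows, ads_rows, max_position=3.0):
    """Join Ads search terms to GSC queries and keep the top-ranking overlaps."""
    best_position = {}
    for row in gsc_rows:
        query = row["query"].strip().lower()
        position = float(row["position"])
        # Keep the best (lowest) average position seen for each query.
        if query not in best_position or position < best_position[query]:
            best_position[query] = position

    overlaps = []
    for row in ads_rows:
        term = row["search_term"].strip().lower()
        if term in best_position and best_position[term] <= max_position:
            overlaps.append({
                "term": term,
                "position": best_position[term],
                "cost": float(row["cost"]),
            })
    # Most expensive overlap first: that is where the savings question starts.
    return sorted(overlaps, key=lambda item: item["cost"], reverse=True)


def read_csv(text):
    """Parse CSV text into a list of dict rows."""
    return list(csv.DictReader(io.StringIO(text)))
```

Because the function returns the matched rows rather than a single total, each one can be spot-checked against the raw exports, which is the verification habit described above.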

The broader implication is that the analyst role in a modern marketing team shifts. It was “the person who writes the queries and builds the dashboards.” It is becoming “the person who asks the right questions, verifies the answers, and decides what to do about them.” I would argue that is the more valuable role anyway. The SQL was never the hard part.


What about marketers who don't code at all?

This is the right question and I want to answer it carefully, because the honest answer is in two parts.

The first part is that Claude Code works in plain English. You do not need to write Python to use it. You do need to be able to describe what you want precisely, which is a different skill than coding and one that many marketers already have from years of writing briefs and specifying campaigns. The barrier to entry is lower than most marketers assume. Will Scott's piece for Search Engine Land makes this point well: he runs a digital marketing agency, describes himself as not a developer, and uses Claude Code inside Cursor to pull Google Search Console, GA4, and Google Ads data every day.

The second part is that you still benefit from understanding the shape of what Claude is doing. If Claude writes a SQL query for you and the query returns a number that looks wrong, you need to be able to spot that the number is wrong. LLMs hallucinate, and that includes hallucinating data analysis. I have personally seen Claude Code confidently report a metric that did not match the underlying JSON. It is rare but it happens, and you should treat Claude's output like you would treat work from a new analyst: trust but verify, especially before anything goes to a client.

With that said, a marketer who is willing to invest 20 hours in learning how to prompt Claude Code effectively, verify its output, and manage a simple project directory will end up producing more and better-quality analytical work than a marketer who outsources the same questions to a SaaS tool. The floor of what Claude Code produces is already above most tool outputs. The ceiling is much higher.

The MCP Ecosystem and Why It Matters More Than the Model

I want to spend another minute on Model Context Protocol because I think most of the existing “Claude Code for SEO” articles undersell it.

MCP is a standardized way for AI agents to connect to external services. Before MCP, every integration was bespoke. If you wanted Claude to read your Google Drive, someone had to write a wrapper. If you wanted it to talk to your CRM, another wrapper. With MCP, a service exposes itself once through the protocol and any MCP-compatible agent can use it. Anthropic introduced the standard and has since been joined by OpenAI, Google DeepMind and most of the agent-tool ecosystem. That network effect is what makes MCP the more durable part of the story.

For SEOs, the MCP servers worth knowing about right now include:

- Infranodus: Entity extraction, topic clustering, content gap analysis, SEO reporting. This is the one I use most.

- Google Drive, Gmail, Google Calendar: Native Anthropic connectors for personal and operational context.

- GA4 (via community MCPs): Query real analytics data in natural language, skip the GA4 UI's genuinely frustrating report builder.

- Google Search Console (via community MCPs): Query your GSC data directly, layered on top of the BigQuery export if you have one.

- Notion, Slack, Linear, Asana: Operational MCPs for connecting Claude to your project management and communication stack.

- Stripe, HubSpot, Salesforce: Go-to-market MCPs if you need marketing-attribution context.

The strategic move for an in-house SEO team in 2026 is to pick the MCPs that match your stack, configure them once in a shared .claude directory at the project level, commit that config to your repo, and onboard every team member into the same environment. That is the foundation. Everything else is workflows built on top.

What Claude Code Doesn't Do Well

I want to be honest about the limits, because this is where most of the AI content on the topic gets lazy.

It is not a replacement for Screaming Frog, Ahrefs, or Semrush. What those tools give you that Claude Code does not is cumulative historical data, preprocessed SERP intelligence, and curated link indexes. You cannot replicate ten years of backlink crawling with a weekend project. You can, however, query and combine data from those tools in ways their dashboards do not support natively. Use Claude Code to add analytical depth on top of your SEO stack, not to replace it wholesale.

It is not a ranking tracker. The GEO and AI-visibility tracking space is still immature and you should not trust any single tool (Claude Code included) to tell you definitively how you are performing in AI search. The data from AI citation tools is directionally useful but not yet rigorous. If anyone sells you a Claude Code workflow that replaces dedicated rank tracking, be skeptical.

It will hallucinate. I have already said this but it is worth saying twice. Verify numbers against the source data. Spot-check summaries. If something looks too clean, open the raw file.

It is not cheap at scale. Claude Code requires a Claude subscription (Pro, Max, or Console/API billing) and heavy agentic usage burns tokens quickly. A technical SEO audit on a small site is cheap. A subagent-heavy crawl of 50,000 URLs with full content analysis is not. Budget for this the way you would budget for a paid-media campaign: with a testing period, a scaling plan, and an ROI benchmark.
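A back-of-envelope estimate is worth running before any large crawl. The sketch below is plain arithmetic; every number in the usage example (tokens per URL and both per-million-token prices) is a placeholder, not Anthropic's actual pricing, so substitute the current rates for the model you plan to use.

```python
def estimate_cost(urls, input_tokens_per_url, output_tokens_per_url,
                  input_price_per_million, output_price_per_million):
    """Estimated spend for a crawl, in the same currency as the prices."""
    input_cost = urls * input_tokens_per_url / 1_000_000 * input_price_per_million
    output_cost = urls * output_tokens_per_url / 1_000_000 * output_price_per_million
    return round(input_cost + output_cost, 2)


# Placeholder figures: 50,000 URLs, 4,000 input and 500 output tokens per URL,
# at hypothetical prices of 2.00 and 10.00 per million tokens.
example = estimate_cost(50_000, 4_000, 500, 2.0, 10.0)
```

With those placeholder inputs the example works out to 650.00: 200 million input tokens at 2.00 per million is 400, plus 25 million output tokens at 10.00 per million is 250. Retries, subagent overhead, and repeated context loading are not modeled here and will push the real figure higher.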

It does not replace human SEO judgment. Anyone who has worked in SEO knows the difference between a technically correct recommendation and a strategically correct one. A 301 redirect that is technically valid can still be the wrong call for the business. Claude Code will execute whatever you tell it to. You still have to tell it the right thing.

What Does This Mean for SEO Agencies and In-House Teams?

My honest view is that the SEO industry is in the middle of a workflow shift with no obvious historical analog, and the pace is outrunning most agency leadership. The data supports this. Stripe deployed Claude Code to 1,370 engineers with a zero-configuration enterprise binary. Rakuten reduced their average feature delivery time from 24 working days to 5 after integrating Claude Code into their development workflow. An independent architecture study of Claude Code that cites Anthropic's internal survey of 132 engineers found that roughly 27% of Claude Code-assisted tasks were work that would not have been attempted without the tool at all. That last number matters the most. Claude Code is not just a productivity multiplier for existing work. It is a change in what work is economically worth doing.

Agencies whose value proposition is “we have access to tools and we have analysts who know how to use them” are going to have a hard time. The tools are getting commoditized and the analysts are getting augmented by agents that work for them. If an agency's pitch is “we'll build you a monthly technical audit deck,” that pitch has a shelf life measured in quarters. The clients who know how to use Claude Code will ask why they are paying $3,000 a month for something they can run in an afternoon.

Agencies whose value proposition is strategic (we understand your business, we know the industry, we have relationships, we bring judgment to the work) will be fine. Maybe better than fine, because the operational overhead of actually executing SEO work is about to fall dramatically and strategic advice becomes the higher-margin part of the engagement.

In-house teams should be thinking about this too. If your SEO function is three analysts running the same weekly reports and making the same recommendations to the same engineering team, that function is going to get compressed. If your SEO function is a senior strategist with a Claude Code stack, an MCP-connected analytics environment, and the ability to prototype and ship technical fixes without waiting on engineering, that function is going to look like the future and get funded.

The skill that matters now is not coding. It is knowing what workflows to build. I would argue that is what always separated good SEOs from average ones. The tools just caught up.

Where Is This Going?

A few predictions, offered with the standard caveat that the pace of change in this space makes any prediction beyond 12 months mostly a vibe.

Over the next year I expect Claude Code and its peers (OpenAI's Codex CLI, Google's Gemini CLI, Cursor as an adjacent tool) to become the default analytical shell for senior marketers the way Excel was the default for the previous generation. Not every marketer, but the ones operating above a certain level of technical fluency, which is a larger group than current adoption suggests.

I expect the SEO tool vendors to either ship first-party MCP servers for their data (Ahrefs and Semrush both have natural incentives here) or face increasing customer pressure to do so. The ones that move early will lock in meaningful integration moats. The ones that hold back on principle will discover that customers will build their own workarounds against whatever API surface exists.

I expect a meaningful portion of “SEO tool” spend to migrate from SaaS subscriptions to compute spend on agent runs, and I expect SaaS vendors to respond by bundling agent workflows into their offerings. Some of them will do this well. Most won't, because the kinds of teams that build tools for a living are structurally different from the kinds of teams that build agentic workflows.

And I expect operating Claude Code (or an equivalent) to become a baseline skill for the next wave of in-house SEO hires, the way we expect analysts to know Excel and SQL today. If you are hiring for SEO in 2027, this will be on the interview rubric.

The Brass Tacks

Claude Code is not hype. It is the most significant tooling change in performance marketing in at least a decade. The SEO industry has seen many new tools marketed as “game-changers” (a phrase I avoid because it usually isn't true, and also because AI writing tools overuse it) and most of them weren't. This one actually is. Not because it does anything humans cannot do, but because it collapses the time and friction between knowing what to do and actually doing it, which is the real constraint on operational SEO work.

If you are running an SEO or performance marketing function in 2026 and you are not yet using Claude Code (or a credible equivalent) daily, that is a gap worth closing this quarter. Not next quarter. The compounding advantage of being three months ahead on this curve is real, and the cost of entry is low enough that there is no good reason to wait.

References and Further Reading

Claude Code and adoption data:

- Anthropic, Claude Code overview: anthropic.com/product/claude-code

- Stripe customer story at Anthropic (1,370 engineers, Scala-to-Java migration): claude.com/customers/stripe

- Ramp customer story at Anthropic (1M+ lines/month, ~50% weekly engineer usage): claude.com/customers/ramp

- Dive into Claude Code: The Design Space of Today's and Future AI Agent Systems (cites the 132-engineer internal Anthropic survey; 27% new-workflow stat): arxiv.org/html/2604.14228v1

Model Context Protocol:

- Wikipedia, Model Context Protocol: en.wikipedia.org/wiki/Model_Context_Protocol

- Pento, A Year of MCP: From Internal Experiment to Industry Standard: pento.ai/blog/a-year-of-mcp-2025-review

- The New Stack, Why the Model Context Protocol Won: thenewstack.io/why-the-model-context-protocol-won

Claude Code for SEO and marketing (the existing coverage):

- Will Scott, How to turn Claude Code into your SEO command center, Search Engine Land: searchengineland.com/claude-code-seo-work-470668

- Animalz, Claude Code for Content Marketers: animalz.co/blog/claude-code

- Synscribe, 7 Ways to Automate SEO Workflows with Claude Code: synscribe.com/blog/seo-automation-using-claude-code

- Stormy AI, Claude Code for Marketers: A Technical SEO Guide to Automated Site Audits: stormy.ai/blog/claude-code-technical-seo-guide

My prior posts on this site:

- LLM brand monitoring on AI search

- Search query data mining with BigQuery

- Using AI to optimize SEO content relevance with cosine similarity (the foundation of QueryDrift.com)