I’ve been on both sides of the page audit deliverable. I’ve handed a 200-row spreadsheet to a stakeholder and watched their eyes glaze over. I’ve also been the in-house person on the receiving end, staring at a list that flagged every URL for something — thin content, missing H2, slow LCP, title too long, title too short, internal links not optimal — and trying to figure out what to actually do on Monday.

The honest answer is, usually, nothing strategic. You finish reading the recommendations and you have a to-do list, not an order of operations. You start at the top of the spreadsheet because there’s no better signal for where to start.

The diagnostic problem is right there in the structure of the audit. Page-level audits treat each URL as an island. The fact is, pages don’t compete for traffic. Topics do. And most of what gets flagged as “low-quality content” in a site-wide audit isn’t really a content quality problem at all. It’s query drift.

What Is Query Drift?

Query drift is the gap between what a page is ranking for in Google and what the page is actually about. It’s measurable:

drift = 1 − cosine_similarity(page_embedding, query_embedding)

Three thresholds matter operationally:

- 0.00 to 0.20: Aligned. The page is ranking for what it’s about. Reinforce it.

- 0.20 to 0.40: Soft Drift. Close to its queries, but not on the nose. Improve relevance.

- 0.40 and up: Divergent. The page is ranking for the wrong thing. Usually a “create new content” signal, not a “fix this page” signal.
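To make the formula and the three bands concrete, here is a minimal sketch in plain Python. The function names are mine, not QueryDrift's, and the embeddings are assumed to be plain lists of floats from whatever model you use. The list above doesn't say which band owns the exact boundary values, so the sketch assumes 0.20 and 0.40 belong to the higher band.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cannot compare a zero vector")
    return dot / (norm_a * norm_b)


def drift(page_embedding, query_embedding):
    """drift = 1 - cosine_similarity(page_embedding, query_embedding)."""
    return 1.0 - cosine_similarity(page_embedding, query_embedding)


def drift_bucket(score):
    """Map a drift score onto the three operational bands.

    Assumption: a score of exactly 0.20 or 0.40 falls into the higher band.
    """
    if score < 0.20:
        return "Aligned"
    if score < 0.40:
        return "Soft Drift"
    return "Divergent"


# Toy 2-dimensional vectors, purely illustrative.
print(drift_bucket(drift([3, 4], [4, 3])))
```

Real embeddings have hundreds of dimensions, but the arithmetic is identical.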

Drift happens for ordinary reasons. Search intent shifts under your feet. You wrote about X but earned links for Y. Internal linking pushed authority to a tangential page that wasn’t built for the query it now ranks for. An AI Overview reframed the SERP and your page is showing up for queries it was never written to answer.

It matters more now than it did a few years ago because Google’s evaluation has changed. With AI Overviews and embedding-based retrieval, the system increasingly grades semantic fit, not keyword match. A drifting page used to coast on links and exact-match usage. The coasting is over.

Why Drift Compounds Site-Wide

The mechanism behind why drift hurts you comes down to two signals that interlock, and both of them shifted in Google’s favor over the last couple of years.

The first is NavBoost. Pandu Nayak’s DOJ testimony and the 2024 Content Warehouse leak both confirm that Chrome clickstream feeds a per-query re-ranking layer — good clicks, bad clicks, last-longest-click. A drifting URL ranking for an off-topic query earns weak engagement. NavBoost demotes that URL for that query. Over time the impressions disappear. That’s the local feedback loop, and it’s mostly self-correcting on a per-page basis.

The second is harder to escape. Site-level signals like siteFocusScore and siteRadius (from the same leak) and the helpful content classifier (now folded into the core algorithm) both grade the concentration of what your site is about. A site full of accidental rankers with thin engagement reads as dispersed and unfocused. The classifier doesn’t just demote the offending page. It can drag the whole site down, including the pages you actually want to win.

That’s the part most audits miss. A divergent URL isn’t just failing to rank for queries it shouldn’t be on. It’s actively diluting Google’s model of what your site is, which costs you on the topics you do want to own. Off-topic thin content is a tax on the cluster you’re trying to build. Pruning it is strategic work, not cosmetic.

Why Page-Level Audits Miss the Big Picture

A page audit answers a useful but local question: is this URL aligned with the queries it’s ranking for? Useful, sure. But there are things a page-level audit cannot tell you no matter how thorough it is.

It cannot tell you which topic owns the site. A page can be perfectly self-consistent and still be a topic the site has no business ranking for. If the homepage says you’re a B2B data science consultancy and you have a beautifully optimized page about home staging, that page can score 95 on a page audit and still be irrelevant to the actual business.

It cannot tell you which URL is the right canonical for a topic. Five pages partially covering “attribution modeling” all look fine on their own. Together, they’re cannibalization. The page audit doesn’t see the cluster.

And it cannot tell you what’s missing. Page audits only score what already exists. They’re blind to topic gaps by design.

The compounding cost is months of tactical work that doesn’t move the cluster. You spend a quarter implementing per-page fixes when the real problem is topic dispersion. The site gets a little tidier and the rankings don’t move. I’ve seen this play out enough times that I stopped trusting the page-first audit as a starting point.

What Is Topic-First SEO?

Topic-first SEO is the mental shift from auditing URLs to auditing the clusters your own Google Search Console data reveals. The unit of analysis is the topic, not the page. That’s the whole reframe.

The real questions stop being “is this page aligned with its queries” and become: what topics is the site actually known for, per Google’s behavior, not per your sitemap? For each topic, which URL is the pillar, which are supporters, and which are accidental rankers ripe for consolidation? And where is the site closest to the homepage’s stated identity vs. where has it wandered into territory it can’t defend?

Page-level audits miss the big picture because they take the page as the unit of analysis. The unit should be the topic. Once you accept that, the rest of the audit flow inverts.

The classical version of this idea is topic clustering for content marketing, which builds the cluster on the way in — planning regional hubs, supporting articles, internal links from day one. Topic-first auditing is the same idea on the way out: working backward from the cluster Google has actually decided you own, and reconciling it against the cluster you intended.

How QueryDrift Implements Topic-First Auditing

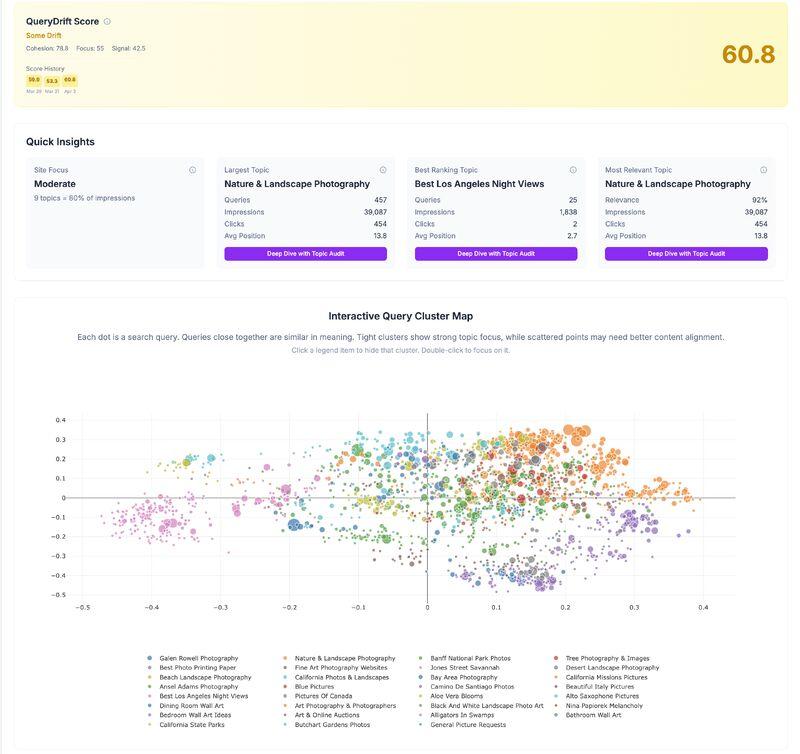

I’ve been building a tool with Grant Simmons called QueryDrift that runs this workflow end to end.

A note on the architecture: v1.0 of QueryDrift shipped with a standalone Page Audit as a separate product. We removed it. Page-level recommendations live inside Topic Audit now, where they inherit the site’s topic strategy as context. That re-architecture is the same argument this article is making. A page audit produced in isolation produces recommendations divorced from strategy. The page work should fall out of the topic work, not run in parallel to it.

Two product surfaces today.

Site Audit: What Is My Site Actually About?

The Site Audit (we also call it SiteDrift) is the strategic view. It pulls the property’s top 5,000 GSC queries and embeds them with BAAI/bge-small-en-v1.5, then clusters with HDBSCAN.

The choice of HDBSCAN over KMeans is deliberate. KMeans forces every query into a bucket, which means it invents topics that aren’t really there. HDBSCAN is density-based, so noisy queries fall out as unclustered. The output is the topic structure that actually exists on the site, not a tidy fiction.

Gemini labels each cluster with a 2 to 5 word topic name. Each cluster then gets a relevance score from 0 to 100 against a homepage-anchored reference centroid:

reference_centroid = 0.3 × homepage_embedding + 0.7 × site_centroid

In plain language: how close is this topic to what your homepage claims you do? The output is a map of the site’s actual topic portfolio, ranked by impressions, clicks, and relevance to identity. That’s the strategic view a page audit cannot produce.
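The reference centroid can be sketched in a few lines. Only the 0.3 / 0.7 blend comes from the formula above. How a cosine similarity becomes a 0 to 100 score is not spelled out here, so the sketch assumes the simplest mapping: clamp the similarity to [0, 1] and multiply by 100. The function names are illustrative.

```python
import math


def reference_centroid(homepage_embedding, site_centroid, w_home=0.3, w_site=0.7):
    """Blend the homepage embedding with the site centroid (0.3 / 0.7)."""
    return [w_home * h + w_site * s
            for h, s in zip(homepage_embedding, site_centroid)]


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def relevance_score(cluster_centroid, reference):
    """Score a topic cluster against the reference centroid.

    Assumption: the similarity is clamped to [0, 1] and scaled to 0-100.
    The real scaling may differ.
    """
    sim = cosine_similarity(cluster_centroid, reference)
    return round(max(0.0, min(1.0, sim)) * 100)


# Toy vectors: the homepage points one way, the site's queries another.
ref = reference_centroid([1.0, 0.0], [0.0, 1.0])
print(relevance_score([0.0, 1.0], ref), relevance_score([1.0, 0.0], ref))
```

Because the site centroid carries the larger weight, a cluster that matches what the site actually ranks for scores higher than one that matches only the homepage.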

Topic Audit: Who Owns This Topic, and What to Do About It

Pick a topic from the Site Audit. Topic Audit fetches GSC data at query and page granularity, drops everything below 0.50 cosine similarity to the topic, then aggregates the surviving queries by landing URL.

The 0.50 cutoff is a relevance gate, and it matters more than it sounds. Without it, a URL gets credit for queries that just happen to share an adjacent word. With it, only queries that are genuinely on-topic count toward a URL’s score. Each URL gets ranked by mean topic alignment.
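The gate-then-aggregate step can be sketched as follows. It assumes each GSC row has already been reduced to a (url, query, similarity_to_topic) tuple. That row shape and the function name are hypothetical, not QueryDrift's actual data model.

```python
from collections import defaultdict


def rank_urls_by_topic_alignment(rows, min_similarity=0.50):
    """Drop off-topic queries, then rank landing URLs by mean alignment.

    rows: iterable of (url, query, similarity_to_topic) tuples.
    Returns (url, mean_similarity, surviving_query_count) tuples,
    best-aligned URL first.
    """
    per_url = defaultdict(list)
    for url, _query, similarity in rows:
        # Relevance gate: queries below the cutoff earn the URL no credit.
        if similarity >= min_similarity:
            per_url[url].append(similarity)

    ranked = [(url, sum(sims) / len(sims), len(sims))
              for url, sims in per_url.items()]
    ranked.sort(key=lambda item: item[1], reverse=True)
    return ranked


# Made-up rows for illustration only.
rows = [
    ("/attribution-guide", "attribution modeling", 0.80),
    ("/attribution-guide", "multi touch attribution", 0.70),
    ("/blog/analytics-tips", "attribution models explained", 0.55),
    ("/blog/analytics-tips", "analytics dashboard ideas", 0.30),
    ("/home-staging", "staging a model home", 0.40),
]
for url, mean_sim, count in rank_urls_by_topic_alignment(rows):
    print(url, round(mean_sim, 2), count)
```

A URL whose queries all fall below the gate drops out of the ranking entirely, which is the point: it has no claim on the topic.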

Pillars surface. Supporters surface. Accidental rankers surface. That’s the cluster-level decision layer, the level at which consolidation, internal linking, and “which page should we double down on” decisions actually get made.

Topic Audit then produces per-URL recommendations on top of that strategic context. The page is embedded as a weighted blend of structured content, weighted the way Google likely sees it:

- Meta title: 3×

- H1: 3×

- H2s: 2×

- Body: 1.5×

- Image alts: 1×
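One way to read "weighted blend" is a weighted average of per-field embeddings, sketched below. This is an assumption: the same weights could instead be applied by repeating text before embedding. The field keys and function name are illustrative. Only the weights come from the list above.

```python
# Weights from the list above. The dictionary keys are illustrative names.
FIELD_WEIGHTS = {
    "meta_title": 3.0,
    "h1": 3.0,
    "h2s": 2.0,
    "body": 1.5,
    "image_alts": 1.0,
}


def blended_page_embedding(field_embeddings, weights=FIELD_WEIGHTS):
    """Weighted average of per-field embedding vectors.

    field_embeddings: dict mapping a field name to its embedding (list of
    floats). Fields missing from the page are simply left out of the dict.
    Assumption: the blend is a weighted mean of vectors.
    """
    if not field_embeddings:
        raise ValueError("need at least one field embedding")

    dim = len(next(iter(field_embeddings.values())))
    total = [0.0] * dim
    weight_sum = 0.0
    for field, vector in field_embeddings.items():
        w = weights[field]
        weight_sum += w
        for i, value in enumerate(vector):
            total[i] += w * value
    return [value / weight_sum for value in total]


# Toy example: the title says one thing, the body another.
page = blended_page_embedding({"meta_title": [1.0, 0.0], "body": [0.0, 1.0]})
print(page)
```

With a 3× title against a 1.5× body, the blended vector sits twice as close to the title's direction as to the body's.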

Every GSC query for the URL is scored against the 0.20 and 0.40 drift thresholds and bucketed into Reinforce, Improve, or Create New. Gemini handles the per-query interpretation, but with the cluster’s topic strategy as input. That’s the difference. The recommendations know what the cluster is supposed to be, not just what the page currently is.

The Site → Topic Workflow

The order is the whole point. Run the audit in this sequence:

- Site Audit to map the real topic portfolio.

- Topic Audit on the three to five clusters that matter most for revenue. You get the URL-level prioritization (pillars, supporters, drifters) and the per-page recommendations to act on, both grounded in the cluster’s topic strategy.

This inverts the usual SEO audit flow, which starts at the page and never reaches strategy. Topics compete. Pages don’t. The audit should reflect that.

What Changes When You Adopt Topic-First Thinking

Audits start producing prioritized decisions instead of decorated to-do lists. “Consolidate these three URLs into the pillar” is a decision. “Add an H2 here” is a task. The first one moves the cluster. The second one moves nothing.

AI Overview performance stops looking random. Clusters with high homepage-anchored relevance and tight cluster cohesion are the ones that survive AI summarization. Loose clusters with weak relevance to the homepage’s identity get dropped. Once you can see that picture, AI Overview behavior is no longer a mystery: it’s a predictable function of how concentrated your topic structure is. “AI Search Broke the Funnel” covers the upstream context on why this matters more now than it used to.

Internal linking finally has a defensible logic. Pillars get the links. Drift candidates get fewer. The link structure starts reflecting the topic structure instead of historical accident.

And you stop confusing “the page is fine” with “the page is in the right cluster.” Those are two different questions and only one of them is strategic.

Page Audits Belong Inside a Topic Frame

Page-level work isn’t going anywhere, and it shouldn’t. It’s the right tool for the moment you’ve already decided which URL deserves the work. Reinforcing a pillar, fixing a soft-drifting page, pruning a divergent one. All of that is page-level work.

The problem isn’t the page-level work. The problem is starting with it. When the page is the entry point, every URL gets equal weight and the audit produces a to-do list instead of a strategy.

The reframe is site, then topic. The Site Audit tells you which clusters matter. Topic Audit tells you which URL owns each cluster, and produces the page-level recommendations on top of that strategic context. The page-level work earns its keep because it inherits the topic view’s decisions, not because it runs alongside them.

A page-level audit asks: is this page aligned with its queries? A topic-first workflow asks the bigger question first. Is the site aligned with its topics?

If you want to try this workflow, run it in order. Site Audit first. Topic Audit on your highest-revenue clusters, which delivers both the URL-level prioritization and the per-page recommendations to act on. That sequence is what produces decisions instead of findings.